
1 Introduction

Until the 1960s, most research on model identification and control system design was concentrated on continuous-time (or analogue) systems represented by a set of linear differential equations. Subsequently, major developments in discrete-time model identification, coupled with the extraordinary rise in importance of the digital computer, led to an explosion of research on discrete-time, sampled data systems. In this case, a ‘real-world’ continuous-time system is controlled or ‘regulated’ using a digital computer, by sampling the continuous-time output, normally at regular sampling intervals, in order to obtain a discrete-time signal for sampled data analysis, modelling and Direct Digital Control (DDC). While adaptive control systems, based directly on such discrete-time models, are now relatively common, many practical control systems still rely on the ubiquitous ‘two-term’, Proportional-Integral (PI) or ‘three-term’, Proportional-Integral-Derivative (PID) controllers, with their predominantly continuous-time heritage. And when such systems, or their more complex relatives, are designed offline, rather than ‘tuned’ online, the design procedure is often based on traditional continuous-time concepts. The resultant control algorithm is then, rather artificially, ‘digitised’ into an approximate digital form prior to implementation.
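The ‘digitising’ step described above can be shown in a minimal sketch. It assumes the widely used backward-Euler (rectangular) approximation of the integral term; Tustin's rule is an equally common alternative. Under that assumption, a continuous-time PI law u(t) = kp e(t) + ki ∫ e dt becomes an incremental difference equation. The class name and gain values are hypothetical illustrations, not a design taken from this book.

```python
from dataclasses import dataclass


@dataclass
class DigitisedPI:
    """Continuous-time PI law u = kp*e + ki*integral(e dt), approximated with the
    backward-Euler rule and written in incremental ('velocity') form:
        u[k] = u[k-1] + kp*(e[k] - e[k-1]) + ki*T*e[k]
    """
    kp: float            # proportional gain (hypothetical value)
    ki: float            # integral gain, per second (hypothetical value)
    T: float             # sampling interval, seconds
    u_prev: float = 0.0  # previous control input u[k-1]
    e_prev: float = 0.0  # previous error e[k-1]

    def update(self, e: float) -> float:
        """Return u[k] given the current error e[k] = yd[k] - y[k]."""
        u = self.u_prev + self.kp * (e - self.e_prev) + self.ki * self.T * e
        self.u_prev, self.e_prev = u, e
        return u


if __name__ == "__main__":
    pi = DigitisedPI(kp=2.0, ki=0.5, T=0.1)
    # A constant unit error: proportional kick of 2.0, then a ramp of 0.05 per sample.
    print([round(pi.update(1.0), 3) for _ in range(4)])  # [2.05, 2.1, 2.15, 2.2]
```

With a constant error, the integral contribution grows by ki·T·e per sample, which is the continuous-time rate of ki·e per second. How well the approximation matches the continuous-time design in general depends on the sampling interval T relative to the system dynamics.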

But does this ‘hybrid’ approach to control system design really make sense? Would it not be both more intellectually satisfying and more practically advantageous to evolve a unified, truly digital approach, which would allow full exploitation of discrete-time theory and digital implementation? In this book, we promote such a philosophy, which we term True Digital Control (TDC), following our initial development of the concept in the early 1990s (e.g. Young et al. 1991) and its subsequent development and application (e.g. Taylor et al. 1996a). TDC encompasses the entire design process, from data collection, data-based model identification and parameter estimation, through to control system design, robustness evaluation and implementation. The TDC approach rejects the idea that a digital control system should initially be designed in continuous-time terms. Rather, it suggests that the control systems analyst should consider the design from a digital, sampled-data standpoint throughout. Of course, this does not mean that a continuous-time model plays no part in TDC design. We believe that an underlying, and often physically meaningful, continuous-time model should still inform the TDC system synthesis. The designer needs to be assured that the discrete-time model provides a relevant description of the continuous-time system dynamics and that the sampling interval is appropriate for control system design purposes. For this reason, the TDC design procedure includes the data-based identification and estimation of continuous-time models.
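The link between a continuous-time model and its discrete-time counterpart, and the influence of the sampling interval, can be illustrated with a minimal sketch. It assumes a first-order continuous-time system τ dy/dt + y = K u(t) whose input is held constant between samples (a zero-order hold). Under that assumption, the exact sampled-data model is y[k] = a y[k-1] + b u[k-1], with a = exp(-T/τ) and b = K(1 - a). The function name and plant values are hypothetical.

```python
import math


def zoh_first_order(K: float, tau: float, T: float) -> tuple[float, float]:
    """Exact zero-order-hold discretisation of tau*dy/dt + y = K*u.

    Returns (a, b) for the difference equation y[k] = a*y[k-1] + b*u[k-1].
    """
    if tau <= 0 or T <= 0:
        raise ValueError("tau and T must be positive")
    a = math.exp(-T / tau)   # discrete-time pole
    b = K * (1.0 - a)        # chosen so the steady-state gain b/(1-a) equals K
    return a, b


if __name__ == "__main__":
    # Hypothetical plant: steady-state gain 2, time constant 5 s.
    for T in (0.1, 1.0, 10.0, 50.0):
        a, b = zoh_first_order(K=2.0, tau=5.0, T=T)
        print(f"T={T:5.1f} s  a={a:.4f}  b={b:.4f}  gain b/(1-a)={b / (1 - a):.3f}")
```

The steady-state gain b/(1 - a) equals K for every T. However, the pole a moves towards 0 as T grows well beyond τ, so the sampled model then carries little dynamic information. It moves towards 1 as T becomes very small, where a and b can become hard to estimate reliably from noisy data.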

One of the key methodological tools for TDC system design is the idea of a Non-Minimal State Space (NMSS) form. Indeed, throughout this book, the NMSS concept is utilised as a unifying framework for generalised digital control system design, with the associated Proportional-Integral-Plus (PIP) control structure providing the basis for the implementation of the designs that emanate from NMSS models. The generic foundations of linear state space control theory laid down in early chapters, with NMSS design as the central worked example, are used subsequently to provide a wide-ranging introduction to other selected topics in modern control theory.

We also consider the subject of stochastic system identification, i.e. the estimation of control models suitable for NMSS design from noisy measured input–output data. Although the coverage of both system identification and control design in this unified manner is rather unusual in a book such as this, we feel it is essential in order to fully satisfy the TDC design philosophy, as outlined later in this chapter. Furthermore, there are valuable connections between these disciplines: for example, in identifying a parametrically efficient (or parsimonious) ‘dominant mode’ model of the kind required for control system design; and in quantifying the uncertainty associated with the estimated model for use in closed-loop stochastic uncertainty and sensitivity analysis, based on procedures such as Monte Carlo Simulation (MCS) analysis.
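Here is a minimal sketch of Monte Carlo Simulation for closed-loop uncertainty analysis, using the simplest possible case. It assumes a first-order discrete-time model y[k] = a y[k-1] + b u[k-1] whose estimated parameters are treated as Gaussian with a given covariance matrix. It also assumes a proportional controller u[k] = kp (yd[k] - y[k]), so that the closed-loop pole is a - b kp. The model, controller, function name and numbers are all hypothetical; the designs in this book use richer models and PIP control.

```python
import numpy as np


def mcs_closed_loop_poles(theta_hat, cov, kp, n_real=10_000, seed=0):
    """Monte Carlo Simulation of closed-loop pole uncertainty.

    Assumed model:      y[k] = a*y[k-1] + b*u[k-1], theta = (a, b) ~ N(theta_hat, cov)
    Assumed controller: u[k] = kp*(yd[k] - y[k])  =>  closed-loop pole p = a - b*kp
    Returns the sampled poles and the fraction of realisations that are stable.
    """
    rng = np.random.default_rng(seed)
    theta = rng.multivariate_normal(theta_hat, cov, size=n_real)  # shape (n_real, 2)
    poles = theta[:, 0] - kp * theta[:, 1]
    frac_stable = float(np.mean(np.abs(poles) < 1.0))
    return poles, frac_stable


if __name__ == "__main__":
    theta_hat = np.array([0.8, 0.4])          # hypothetical estimates of (a, b)
    cov = np.diag([0.02**2, 0.05**2])         # hypothetical estimation covariance
    for kp in (1.5, 4.5):
        poles, frac = mcs_closed_loop_poles(theta_hat, cov, kp)
        print(f"kp={kp}: mean pole {poles.mean():.3f}, std {poles.std():.3f}, "
              f"stable in {100 * frac:.1f}% of realisations")
```

With kp = 1.5, the nominal pole is 0.2 and essentially every realisation is stable. With kp = 4.5, the nominal pole sits at -1.0, on the stability boundary, so roughly half of the realisations are unstable. A purely nominal analysis would not reveal this.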

This introductory chapter reviews some of the standard terminology and concepts in automatic control, as well as the historical context in which the TDC methodology described in the present book was developed. Naturally, subjects of particular importance to TDC design are considered in much more detail later and the main aim here is to provide the reader with a selective and necessarily brief overview of the control engineering discipline (sections 1.1 and 1.2), before introducing some of the basic concepts behind the NMSS form (section 1.3) and TDC design (section 1.4). This is followed by an outline of the book (section 1.5) and concluding remarks (section 1.6).

1.1 Control Engineering and Control Theory

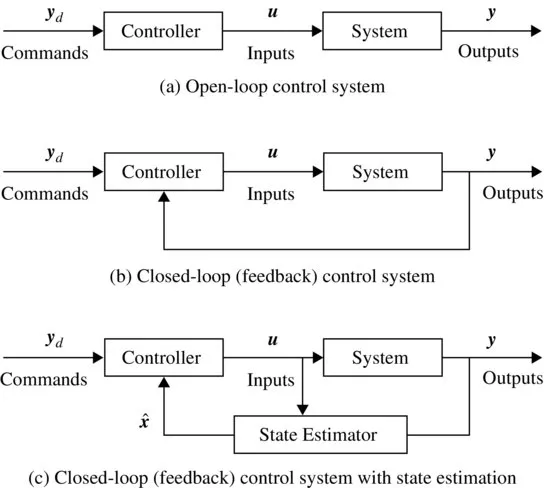

Control engineering is the science of altering the dynamic behaviour of a physical process in some desired way (Franklin et al. 2006). The scale of the process (or system) in question may vary from a single component, such as a mass flow valve, through to an industrial plant or a power station. Modern examples include aircraft flight control systems, car engine management systems, autonomous robots and even the design of strategies to control carbon emissions into the atmosphere. The control systems shown in Figure 1.1 highlight essential terminology and will be referred to over the following few pages.

This book considers the development of digital systems that control the output variables of a system, denoted by a vector $\mathbf{y}$ in Figure 1.1, which are typically positions or levels, velocities, pressures, torques, temperatures, concentrations, flow rates and other measured variables. This is achieved by the design of an online control algorithm (i.e. a set of rules or mathematical equations) that updates the control input variables, denoted by a vector $\mathbf{u}$ in Figure 1.1, automatically and without human intervention, in order to achieve some defined control objectives. These control inputs are so named because they can directly change the behaviour of the system. Indeed, for modelling purposes, the engineering system under study is defined by these input and output variables, and by the assumed causal dynamic relationships between them. In practice, the control inputs usually represent a source of energy in the form of electric current, hydraulic fluid or pneumatic pressure, and so on. In the case of an aircraft, for example, the control inputs will lead to movement of the ailerons, elevators and fin, in order to manipulate the attitude of the aircraft during its flight mission. Finally, the command input variables, denoted by a vector $\mathbf{y}_d$ in Figure 1.1, define the problem-dependent ‘desired’ behaviour of the system: namely, the nature of the short-term pitch, roll and yaw of an aircraft in the local reference frame; and its longer-term behaviour, such as the gradual descent of an aircraft onto the runway, represented by a time-varying altitude trajectory.
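The roles of y, u and y_d can be made concrete with a minimal sketch of a single-input, single-output sampled-data loop. It assumes a hypothetical first-order plant y[k] = a y[k-1] + b u[k-1] and a hypothetical incremental PI control algorithm with per-sample gains kp and ki. At each sampling instant, the controller reads the measured output, forms the error relative to the command input and updates the control input.

```python
def simulate_loop(yd, a=0.9, b=0.2, kp=1.0, ki=0.5):
    """Simulate a sampled-data feedback loop (all values hypothetical).

    Plant:      y[k] = a*y[k-1] + b*u[k-1]
    Controller: e[k] = yd[k] - y[k]
                u[k] = u[k-1] + kp*(e[k] - e[k-1]) + ki*e[k]   (incremental PI)
    Returns the lists of outputs y[k] and control inputs u[k].
    """
    y_prev = u_prev = e_prev = 0.0
    ys, us = [], []
    for r in yd:
        y = a * y_prev + b * u_prev                 # plant responds to the last input
        e = r - y                                   # command minus measured output
        u = u_prev + kp * (e - e_prev) + ki * e     # control algorithm update
        ys.append(y)
        us.append(u)
        y_prev, u_prev, e_prev = y, u, e
    return ys, us


if __name__ == "__main__":
    ys, us = simulate_loop([1.0] * 60)              # unit step in the command input
    print(f"y after 60 samples: {ys[-1]:.4f}, u: {us[-1]:.4f}")
```

For these particular values, the closed-loop poles are 0.8 ± 0.245j (magnitude about 0.84). The output therefore settles on the command level, and the control input settles at (1 - a)/b = 0.5, the level needed to hold the plant output at 1.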

Control engineers design the ‘Controller’ in Figure 1.1 on the basis of control system design theory. This theory is normally concerned with the mathematical analysis of dynamical systems using various analytical techniques, often including some form of optimisation over time. In this latter context, there is a close connection between control theory and the mathematical discipline of optimisation. In general terms, the elements needed to define a control optimisation problem are knowledge of: (i) the dynamics of the process; (ii) the system variables that are observable at a given time; and (iii) an optimisation criterion of some type.

A well-known general approach to the optimal control of dynamic systems is ‘dynamic programming’, developed by Richard Bellman (1957). Solving the associated Hamilton–Jacobi–Bellman equation is often very difficult or impossible for nonlinear systems, but it is feasible in the case of linear systems optimised in relation to quadratic cost functions with quadratic constraints (see later and Appendix A, section A.9), where the solution is a ‘linear feedback control’ law (see e.g. Bryson and Ho 1969). The best-known approaches of this type are the Linear-Quadratic (LQ) method for deterministic systems and the Linear-Quadratic-Gaussian (LQG) method for uncertain stochastic systems affected by noise. Here the system relations are linear, the cost is quadratic and the noise affecting the system is assumed to have a Gaussian (‘normal’) amplitude distribution. LQ and LQG optimal feedback control are particularly important because they have a complete and rigorous theoretical background, while at the same time introducing key concepts in control, such as ‘feedback’, ‘controllability’, ‘observability’ and ‘stability’ (see later).
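The statement that the linear-quadratic problem yields a linear feedback law can be illustrated with a minimal sketch. It assumes a deterministic discrete-time state space model x[k+1] = A x[k] + B u[k] and an unconstrained quadratic cost Σ (x[k]ᵀ Q x[k] + u[k]ᵀ R u[k]). Applying dynamic programming backwards in time gives the Riccati recursion below, and iterating it to convergence gives the infinite-horizon gain K in u[k] = -K x[k]. The function name and example values are hypothetical.

```python
import numpy as np


def dlqr_by_dp(A, B, Q, R, tol=1e-10, max_iter=10_000):
    """Infinite-horizon discrete-time LQ gain by backward dynamic programming.

    Minimises sum_k x[k]'Q x[k] + u[k]'R u[k] subject to x[k+1] = A x[k] + B u[k].
    Each backward step of the Riccati recursion is
        K = (R + B'PB)^{-1} B'PA,   P <- Q + A'P(A - BK),
    repeated until P stops changing. The optimal law is u[k] = -K x[k].
    """
    P = np.array(Q, dtype=float)
    for _ in range(max_iter):
        K = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
        P_next = Q + A.T @ P @ (A - B @ K)
        converged = np.max(np.abs(P_next - P)) < tol
        P = P_next
        if converged:
            # Recompute the gain from the converged cost matrix.
            K = np.linalg.solve(R + B.T @ P @ B, B.T @ P @ A)
            return K, P
    raise RuntimeError("Riccati recursion did not converge")


if __name__ == "__main__":
    T = 0.1                                    # hypothetical sampling interval
    A = np.array([[1.0, T], [0.0, 1.0]])       # sampled double integrator
    B = np.array([[0.5 * T**2], [T]])
    K, P = dlqr_by_dp(A, B, Q=np.eye(2), R=np.array([[1.0]]))
    print("K =", K)
    print("closed-loop eigenvalue magnitudes:", np.abs(np.linalg.eigvals(A - B @ K)))
```

For the scalar case A = B = Q = R = 1, the recursion converges to P = (1 + √5)/2 and K = (√5 - 1)/2. In practice, SciPy's `scipy.linalg.solve_discrete_are` solves the same steady-state Riccati equation directly and is the more usual choice.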

Figure 1.1b and Figure 1.1c sh...