
Chapter 1

Bayesian Inference and Markov Chain Monte Carlo

1.1 Bayes

Bayesian inference is a probabilistic inferential method. In the last two decades, it has become more popular than ever due to affordable computing power and recent advances in Markov chain Monte Carlo (MCMC) methods for approximating high-dimensional integrals.

Bayesian inference can be traced back to Thomas Bayes (1764), who derived the inverse probability of the success probability θ in a sequence of independent Bernoulli trials, where θ was taken from the uniform distribution on the unit interval (0, 1) but treated as unobserved. For later reference, we describe his experiment using familiar modern terminology as follows.

Example 1.1 The Bernoulli (or Binomial) Model With Known Prior

Suppose that θ ~ Unif(0, 1), the uniform distribution over the unit interval (0, 1), and that x1,..., xn is a sample from Bernoulli(θ), which has the sample space $\mathcal{X}$ = {0, 1} and probability mass function (pmf)

$$\Pr(X = 1 \mid \theta) = \theta \quad \text{and} \quad \Pr(X = 0 \mid \theta) = 1 - \theta,$$

where X denotes the Bernoulli random variable (r.v.) with X = 1 for success and X = 0 for failure. Write

$$N = \sum_{i=1}^{n} x_i,$$

the observed number of successes in the n Bernoulli trials. Then N|θ ~ Binomial(n, θ), the Binomial distribution with parameters size n and probability of success θ.

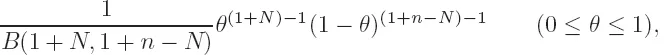

The inverse probability of θ given x1,..., xn, known as the posterior distribution, is obtained from Bayes’ theorem, or more rigorously in modern probability theory, the definition of conditional distribution, as the Beta distribution Beta(1 + N, 1 + n − N) with probability density function (pdf)

$$\frac{1}{B(1+N,\, 1+n-N)}\, \theta^{(1+N)-1} (1-\theta)^{(1+n-N)-1} \qquad (0 \le \theta \le 1),$$

where B(·, ·) stands for the Beta function.
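As a quick numerical check of Example 1.1, the following is a minimal sketch that simulates Bernoulli data and evaluates the Beta(1 + N, 1 + n − N) posterior. It is an illustration under stated assumptions, not part of the original text: the helper names (`log_beta`, `posterior_params`, `beta_pdf`), the seed, the sample size of 50, and the value `true_theta = 0.3` are all hypothetical choices.

```python
import math
import random


def log_beta(a, b):
    """Log of the Beta function B(a, b), computed via log-gamma for stability."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def posterior_params(xs):
    """Return the Beta parameters (1 + N, 1 + n - N) under the Unif(0, 1) prior."""
    n = len(xs)
    successes = sum(xs)  # N, the observed number of successes
    return 1 + successes, 1 + n - successes


def beta_pdf(theta, a, b):
    """Density of Beta(a, b) at theta, valid for 0 < theta < 1."""
    log_density = (
        (a - 1) * math.log(theta)
        + (b - 1) * math.log(1 - theta)
        - log_beta(a, b)
    )
    return math.exp(log_density)


# Hypothetical experiment: theta is fixed here only to generate data;
# in the model it is treated as unobserved.
rng = random.Random(0)  # fixed seed, for reproducibility only
true_theta = 0.3
xs = [1 if rng.random() < true_theta else 0 for _ in range(50)]

a, b = posterior_params(xs)
print(f"N = {sum(xs)}, posterior = Beta({a}, {b})")
print(f"posterior mean = {a / (a + b):.3f}")
print(f"posterior density at 0.3 = {beta_pdf(0.3, a, b):.3f}")
```

With n = 0 observations the posterior reduces to Beta(1, 1), which is the Unif(0, 1) prior itself, so `beta_pdf(theta, 1, 1)` returns 1 for any θ in (0, 1).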

1.1.1 Specification of Bayesian Models

Real-world problems in statistical inference involve the unknown quantity θ and observed data X. For different views on the philosophical foundations of the Bayesian approach, see Savage (1967a, b), Berger (1985), Rubin (1984), and Bernardo and Smith (1994). As far as the mathematical description of a Bayesian model is concerned, Bayesian data analysis amounts to

(i) specifying a sampling model for the observed data X, conditioned on an unknown quantity θ,

$$X \mid \theta \sim f(X \mid \theta),$$

where f(X|θ) stands for either pdf or pmf as appropriate, and

(ii) specifying a marginal distribution π(θ) for θ, called the prior distribution or simply the prior for short,

$$\theta \sim \pi(\theta).$$

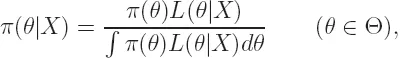

Technically, data analysis for producing inferential results on assertions of interest is reduced to computing integrals with respect to the posterior distribution, or posterior for short,

$$\pi(\theta \mid X) = \frac{\pi(\theta)\, L(\theta \mid X)}{\int \pi(\theta)\, L(\theta \mid X)\, d\theta},$$

where L(θ|X) ∝ f(X|θ) in θ, called the likelihood of θ given X. Our focus in this book is on efficient and accurate approximations to these integrals for scientific inference. Thus, only a limited discussion of Bayesian inference is necessary.
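To make "computing integrals with respect to the posterior" concrete, here is a minimal sketch that approximates the posterior mean of θ in the Bernoulli model of Example 1.1 by normalizing prior times likelihood on a grid. It uses a plain midpoint rule, which is deterministic quadrature rather than MCMC, and is workable only because θ is one-dimensional. The function name `posterior_mean_on_grid` and the grid size are assumptions made for illustration.

```python
import math


def posterior_mean_on_grid(n, successes, grid_size=10_000):
    """Approximate E[theta | X] with the midpoint rule on (0, 1).

    Assumes the Unif(0, 1) prior and Bernoulli likelihood of Example 1.1,
    so the unnormalized posterior is theta^N * (1 - theta)^(n - N).
    """
    h = 1.0 / grid_size
    numerator = 0.0    # approximates the integral of theta * prior * likelihood
    denominator = 0.0  # approximates the normalizing integral
    for k in range(grid_size):
        theta = (k + 0.5) * h  # midpoint of the k-th cell, strictly inside (0, 1)
        log_lik = successes * math.log(theta) + (n - successes) * math.log(1 - theta)
        weight = math.exp(log_lik)  # the prior density equals 1 on (0, 1)
        numerator += theta * weight
        denominator += weight
    # The common cell width h cancels in the ratio.
    return numerator / denominator


n, successes = 10, 3
approx = posterior_mean_on_grid(n, successes)
exact = (1 + successes) / (2 + n)  # mean of Beta(1 + N, 1 + n - N)
print(f"grid approximation = {approx:.6f}, closed form = {exact:.6f}")
```

A grid with m points per coordinate needs m^d evaluations for a d-dimensional θ, which is why this approach does not carry over to high-dimensional integrals.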

1.1.2 The Jeffreys Priors and Beyond

By its nature, Bayesian inference is necessarily subjective because specification of the full Bayesian model amounts to practically summarizing available information in terms of precise probabilities. Specification of prob...