We will now offer a high-level view of why DL is important and how it fits into the discussion about AI. Then, we will look at the historical development of DL, as well as current and future applications.

So, who are you, dear reader? Why are you interested in DL? Do you have your private vision for AI? Or do you have something more modest? What is your origin story?

In our survey of colleagues, teachers, and meetup acquaintances, the origin story of someone with a more formal interest in machines has a few common features. It doesn't matter much if you grew up playing games against the computer, an invisible enemy who sometimes glitched out, or if you chased down actual bots in id Software's Quake back in the late 1990s; the idea of some combination of software and hardware thinking and acting independently had an impact on each of us early on in life.

And then, as time passed, with age, education, and exposure to pop culture, your ideas grew refined and maybe you ended up as a researcher, engineer, hacker, or hobbyist, and now you're wondering how you might participate in booting up the grand machine.

If your interests are more modest, say you are a data scientist looking to understand cutting-edge techniques, but are ambivalent about all of this talk of sentient software and science fiction, you are, in many ways, better prepared for the realities of ML in 2019 than most. Each of us, regardless of the scale of our ambition, must come to understand the logic of code, and that takes hard work and trial and error. Thankfully, we have very fast graphics cards.

And what is the result of these basic truths? Right now, in 2019, DL has had an impact on our lives in numerous ways. Hard problems are being solved. Some trivial, some not. Yes, Netflix has a model of your most embarrassing movie preferences, but Facebook has automatic image annotation for the visually impaired. Understanding the potential impact of DL is as simple as watching the expression of joy on the face of someone who has just seen a photo of a loved one for the first time.


We will now briefly cover the history of DL and the historical context from which it emerged, including the following:

- The idea of AI

- The beginnings of computer science/information theory

- Current academic work about the state/future of DL systems

While we are specifically interested in DL, the field didn't emerge out of nothing. It is a group of models and algorithms within ML, which is itself a branch of computer science. ML forms one approach to AI. The other, so-called symbolic AI, revolves around hand-crafted (rather than learned) features and rules written in code, rather than a weighted model that contains patterns extracted algorithmically from data.
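To make the contrast between hand-crafted rules and learned weights concrete, here is a minimal sketch in Python. Everything in it is an assumption chosen for illustration: the toy task (is a point above the line y = x?), the function names, the training points, and the use of a simple perceptron as the learned model. It is not a DL model, only the smallest example of weights being extracted from data.

```python
# Toy task: decide whether a point (x, y) lies above the line y = x.

def symbolic_rule(x, y):
    """Symbolic approach: a person writes the decision logic directly."""
    return 1 if y > x else 0

def train_perceptron(samples, epochs=20, lr=0.1):
    """Learned approach: adjust weights whenever a prediction is wrong."""
    w_x, w_y, bias = 0.0, 0.0, 0.0
    for _ in range(epochs):
        for (x, y), label in samples:
            pred = 1 if w_x * x + w_y * y + bias > 0 else 0
            error = label - pred          # 0 when correct, +1 or -1 otherwise
            w_x += lr * error * x
            w_y += lr * error * y
            bias += lr * error
    return w_x, w_y, bias

def learned_model(weights, x, y):
    """Apply the learned weights; no rule about y > x appears here."""
    w_x, w_y, bias = weights
    return 1 if w_x * x + w_y * y + bias > 0 else 0

# Hypothetical training data; the labels come from the hand-written rule.
points = [(0, 1), (1, 0), (2, 3), (3, 2), (-1, 0), (0, -1), (1, 2), (2, 1)]
samples = [(p, symbolic_rule(*p)) for p in points]
weights = train_perceptron(samples)
```

The rule in `symbolic_rule` was typed in by hand, while `learned_model` only multiplies and adds numbers that were found by looking at examples. On this toy data, the two agree on every training point.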

The idea of thinking machines, before becoming a science, was very much a fiction that began in antiquity. The Greek god of arms manufacturing, Hephaestus, built automatons out of gold and silver. They served his whims and are an early example of human imagination naturally considering what it might take to replicate an embodied form of itself.

Bringing the history forward a few thousand years, there are several key figures in 20th-century information theory and computer science who built the platform that allowed the development of AI as a distinct field, including the recent work in DL we will be covering.

The first major figure, Claude Shannon, offered us a general theory of communication. Specifically, he described, in his landmark paper, A Mathematical Theory of Communication, how to guard against information loss when transmitting over an imperfect medium (like, say, using vacuum tubes to perform computation). This notion, particularly his noisy-channel coding theorem, proved crucial for handling arbitrarily large quantities of data and algorithms reliably, without the errors of the medium itself being introduced into the communications channel.

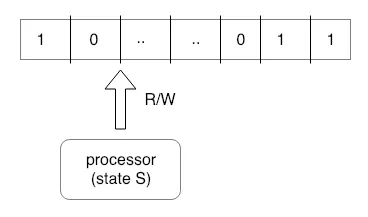
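The idea of protecting a message against a noisy medium can be sketched with a repetition code, which is the simplest possible error-correcting scheme. Note that this is our own illustration and not the construction from Shannon's paper: the codes his theorem promises are far more efficient. The function names, the message, and the flipped positions below are all hypothetical.

```python
def encode(bits, n=3):
    """Repeat every bit n times (a simple repetition code)."""
    return [b for b in bits for _ in range(n)]

def noisy_channel(bits, flip_positions):
    """Flip the bits at the given positions to mimic channel noise."""
    return [b ^ 1 if i in flip_positions else b for i, b in enumerate(bits)]

def decode(bits, n=3):
    """Recover each original bit by majority vote over its n copies."""
    return [1 if sum(bits[i:i + n]) > n // 2 else 0
            for i in range(0, len(bits), n)]

message = [1, 0, 1, 1]
sent = encode(message)                       # 12 bits go over the channel
received = noisy_channel(sent, {0, 4, 11})   # at most one flip per 3-bit block
recovered = decode(received)                 # majority vote undoes the flips
```

As long as no more than one bit in each block of three is corrupted, `recovered` matches `message`. Two flips in the same block defeat the vote, which is the trade-off between redundancy and reliability that better codes manage far more cleverly.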

Alan Turing described his Turing machine in 1936, offering us a universal model of computation. With the fundamental building blocks he described, he defined the limits of what a machine might compute. His later work on computer design drew on John von Neumann's idea of the stored program. The key insight from Turing's work is that digital computers can simulate any process of formal reasoning (the Church-Turing thesis). The following diagram shows the Turing machine process:

So, you mean to tell us, Mr. Turing, that computers might be made to reason…like us?!
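For readers who prefer code to diagrams, a Turing machine can be sketched in a few lines of Python: a tape, a read/write head, a current state, and a table of rules. The simulator and the rule table below are our own hypothetical example (a machine that adds 1 to a binary number), not something taken from Turing's paper.

```python
def run_turing_machine(tape, rules, state="start", blank="_", max_steps=1000):
    """Run a single-tape machine until it reaches the 'halt' state."""
    cells = dict(enumerate(tape))   # sparse tape: position -> symbol
    head = 0
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = cells.get(head, blank)
        write, move, state = rules[(state, symbol)]
        cells[head] = write
        head += 1 if move == "R" else -1
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)

# Hypothetical rule table: add 1 to a binary number.
# (state, symbol read) -> (symbol to write, head move, next state)
increment = {
    ("start", "0"): ("0", "R", "start"),   # scan right to the end of the number
    ("start", "1"): ("1", "R", "start"),
    ("start", "_"): ("_", "L", "carry"),   # step back onto the last digit
    ("carry", "1"): ("0", "L", "carry"),   # 1 + 1 = 0, carry moves left
    ("carry", "0"): ("1", "L", "halt"),    # absorb the carry and stop
    ("carry", "_"): ("1", "L", "halt"),    # all 1s: the number grows a digit
}

result = run_turing_machine("1011", increment)   # binary 11 plus 1
```

Here, `result` is `"1100"`, which is binary for 12. The machine never "knows" arithmetic; it only reads a symbol, consults the table, writes, and moves, which is exactly why the model is such a useful yardstick for what can be computed at all.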

John von Neumann was himself influenced by Turing's 1936 paper. Before transistors became widespread, when vacuum tubes were the dominant means of computation (in systems such as ENIAC and its derivatives), von Neumann wrote his final work, The Computer and the Brain, which was left unfinished at his death. Despite remaining incomplete, it gave early co...