1 Mission-Critical Cloud Computing for Critical Infrastructures

Thoshitha Gamage, David Anderson, David Bakken, Kenneth Birman, Anjan Bose, Carl Hauser, Ketan Maheshwari, and Robbert van Renesse

CONTENTS

1.1 Introduction

1.1.1 Cloud Computing

1.1.2 Advanced Power Grid

1.2 Cloud Computing’s Role in the Advanced Power Grid

1.2.1 Berkeley Grand Challenges and the Power Grid

1.3 Model for Cloud-Based Power Grid Applications

1.4 GridCloud: A Capability Demonstration Case Study

1.4.1 GridStat

1.4.2 Isis2

1.4.3 TCP-R

1.4.4 GridSim

1.4.5 GridCloud Architecture

1.5 Conclusions

References

1.1 INTRODUCTION

The term cloud is becoming prevalent in nearly every facet of day-to-day life, raising an important research question: how can the cloud improve future critical infrastructures? Cloud computing has already made a huge impact on the computing landscape and has become a permanent part of almost all sectors of industry. The same, however, cannot be said of critical infrastructures. Most notably, the power industry has been very cautious about adopting cloud-based computing capabilities.

This is not a total surprise: the power industry is notoriously conservative about changing its operating model, and its rate commissions are generally focused on short-term goals. With thousands of moving parts, owned and operated by just as many stakeholders, even modest changes are difficult. Furthermore, continuing to operate while incorporating large paradigm shifts is neither a straightforward nor a risk-free process. In addition to industry conservatism, progress is slowed by the lack of comprehensive cloud-based solutions meeting current and future power grid application requirements. Nevertheless, there are numerous opportunities on many fronts—from bulk power generation, through wide-area transmission, to residential distribution, including at the microgrid level—where cloud technologies can bolster power grid operations and improve the grid’s efficiency, security, and reliability.

The impact of cloud computing is best exemplified by the recent boom in e-commerce and online shopping. The cloud has empowered modern customers with outstanding bargaining power in making their purchasing choices by providing up-to-date pricing information on products from a wide array of sources whose computing infrastructure is cost-effective and scalable on demand. For example, not long ago air travelers relied on local travel agents to get the best prices on their reservations. Cloud computing has revolutionized this market, allowing vendors to easily provide customers with web-based reservation services.

In fact, a recent study shows that online travel e-commerce in the United States grew from $30 billion in 2002 to $103 billion in 2012, breaking the $100 billion mark for the first time [1]. A similar phenomenon applies to retail shopping. Online retail shops now offer a variety of products at competitive prices, ranging from consumer electronics, clothing, books, jewelry, and video games to event tickets and digital media. Mainstream online shops such as Amazon, eBay, and Etsy provide customers with an unprecedented global marketplace to both buy and sell items. Almost all major US retail giants, such as Walmart, Macy’s, BestBuy, and Target, have adopted a hybrid sales model, providing online shops to complement the traditional in-store shopping experience. A more recent trend is flash sale sites (Fab, Woot, Deals2Buy, Totsy, MyHabit, etc.), which offer limited-time deals. All in all, retail e-commerce in the United States increased by as much as 15% in 2012, totaling $289 billion. To put this into perspective, the total was $72 billion 10 years earlier. Such rapid growth relied heavily on cloud-based technology to provide the massive computing resources behind online shopping.

1.1.1 CLOUD COMPUTING

What truly characterizes cloud computing is its business model. The cloud provides on-demand access to virtually limitless hardware and software resources meeting the users’ requirements. Furthermore, users only pay for resources they use, based on the time of use and capacity. The National Institute of Standards and Technology (NIST) defines five essential cloud characteristics: on-demand self-service, broad network access, resource pooling, rapid elasticity, and measured service [2].
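The pay-per-use idea can be made concrete with a small calculation. The sketch below is illustrative only: the hourly demand profile, the rate of 10 cents per unit-hour, and the function names are assumptions, not figures from any provider. It compares a metered bill with the cost of capacity sized for the busiest hour, priced at the same assumed rate.

```python
def metered_cost(demand_per_hour, rate_cents):
    """Pay only for the units actually used in each hour."""
    return sum(demand_per_hour) * rate_cents


def peak_provisioned_cost(demand_per_hour, rate_cents):
    """Pay for peak capacity in every hour, whether it is used or not."""
    return max(demand_per_hour) * len(demand_per_hour) * rate_cents


# Hypothetical workload: mostly idle, with one busy hour.
demand = [2, 2, 10, 2]   # resource units needed in each hour
rate = 10                # assumed price in cents per unit-hour

print(metered_cost(demand, rate))           # 160
print(peak_provisioned_cost(demand, rate))  # 400
```

Under these assumed numbers the metered bill is 40% of the peak-sized one. Real pricing depends on the provider and on how bursty the workload is.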

The computational model of the cloud features two key characteristics—abstraction and virtualization. The cloud provides its end users with well-defined application programming interfaces (APIs) that support requests to a wide range of hardware and software resources. Cloud computing supports various configurations (central processing unit [CPU], memory, platform, input/output [I/O], networking, storage, servers) and capacities (scale) while abstracting resource management (setup, startup, maintenance, etc.), underlying infrastructure technology, physical space, and human labor requirements. The end users see only APIs when they access services on the cloud. For example, users of Dropbox, the popular cloud-based online storage, only need to know that their stored items are accessible through the API; they do not need any knowledge of the underlying infrastructure supporting the service. Furthermore, end users are relieved of owning large computing resources that are often underused. Instead, resources are housed in large data centers as a shared resource pool serving multiple users, thus optimizing their use and amortizing the cost of maintenance. At the same time, end users are unaware of where their resources physically reside, effectively virtualizing the computing resources.

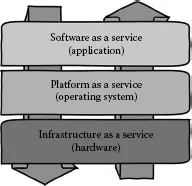

Cloud computing provides three service models: software as a service (SaaS), platform as a service (PaaS), and infrastructure as a service (IaaS). Each of these service models provides unique APIs. Services can be purchased separately, but are typically purchased as a solution stack (Figure 1.1). The SaaS model offers end-point business applications that are customizable and configurable based on specific needs. One good example is the Google Apps framework, which offers a large suite of end-user applications (email, online storage, streaming channels, domain names, messaging, web hosting, etc.) that individuals, businesses, universities, and other organizations can purchase individually or in combination. Software offered in this manner has a shorter development life cycle, resulting in frequent updates and up-to-date versions. The life-cycle maintenance is explicitly handled by the service provider, who offers the software on a pay-per-use basis. Since the software is hosted in the cloud, there is no explicit installation or maintenance process for the end users in their native environment. Prominent SaaS providers include Salesforce, Google, Microsoft, Intuit, and Oracle.

The PaaS model offers a development environment, middleware capabilities, and a deployment stack for application developers to build tailor-made applications or host prepurchased SaaS. Amazon Web Services (AWS), Google App Engine, and Microsoft Azure are a few examples of PaaS. In contrast to SaaS, PaaS does not abstract development life-cycle support, given that most end users in this model are application developers. Nevertheless, the abstraction aspect of cloud computing is still present in PaaS, where developers rely on underlying abstracted features such as infrastructure, operating system, backup and version control features, development and testing tools, runtime environment, workflow management, code security, and collaborative facilities.

FIGURE 1.1 Cloud service models as a stack.

The IaaS model offers the fundamental hardware, networking, and storage capabilities needed to host PaaS or custom user platforms. Services offered in IaaS include hardware-level provisioning, public and private network connectivity, (redundant) load balancing, replication, data center space, and firewalls. IaaS relieves end users of operational and capital expenses. While the other two models also provide these features, here they are much more prominent, since IaaS is the closest model to actual hardware. Moreover, since the actual hardware is virtualized in climate-controlled data centers, IaaS can shield end users from eventual hardware failures, greatly increasing availability and eliminating repair and maintenance costs. A popular IaaS provider, Amazon Elastic Compute Cloud (EC2), offers 9 hardware instance families in 18 types [3]. Some of the other IaaS providers include GoGrid, Elastic Hosts, AppNexus, and Mosso [4].

1.1.2 ADVANCED POWER GRID

Online shopping is just one of many instances where cloud computing is making its mark on society. The power grid, in fact, is currently at an interesting crossroads in this technological space. One fundamental capability that engineers are striving to improve is the grid’s situational awareness—its real-time knowledge of grid state—through highly time-synchronized phasor measurement units (PMUs), accurate digital fault recorders (DFRs), advanced metering infrastructure (AMI), smart meters, and significantly better communication. The industry is also facing a massive influx of ubiquitous household devices that exchange information related to energy consumption. In light of these new technologies, the traditional power grid is being transformed into what is popularly known as the “smart grid” or the “advanced power grid.”

The evolution of the power grid brings its own share of challenges. The newly introduced data have the potential to dramatically increase accuracy, but only if processed quickly and correctly. True situational awareness and real-time control decisions go hand in hand. The feasibility of achieving these two objectives, however, heavily depends on three key features:

1. The ability to capture the power grid state accurately and synchronously

2. The ability to deliver grid state data reliably and in a timely manner over a (potentially) wide area

3. The ability to rapidly process large quantities of state data and redirect the resulting information to appropriate power application(s), and, to a lesser extent, the ability to rapidly acquire computing resources for on-demand data processing
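As a rough illustration of how synchronous capture feeds processing, the sketch below groups timestamped readings into frames and keeps only the frames in which every expected device reported. It is a minimal sketch under stated assumptions: the device names, millisecond timestamps, values, and the `align_frames` helper are all hypothetical, and a real system would also need a policy for late or missing data.

```python
from collections import defaultdict


def align_frames(measurements, expected_devices):
    """Group readings by timestamp and keep only complete frames.

    measurements: iterable of (device, timestamp_ms, value) tuples.
    Returns {timestamp_ms: {device: value}} for timestamps at which
    every expected device reported.
    """
    frames = defaultdict(dict)
    for device, timestamp_ms, value in measurements:
        frames[timestamp_ms][device] = value

    expected = set(expected_devices)
    return {
        ts: readings
        for ts, readings in sorted(frames.items())
        if set(readings) == expected
    }


# Hypothetical per-unit voltage magnitudes from two PMUs.
readings = [
    ("pmu_a", 0, 1.01),
    ("pmu_b", 0, 0.99),
    ("pmu_a", 33, 1.02),  # pmu_b's reading for t=33 ms never arrived
]

complete = align_frames(readings, ["pmu_a", "pmu_b"])
print(complete)  # {0: {'pmu_a': 1.01, 'pmu_b': 0.99}}
```

Grouping by a shared timestamp only works when the devices sample on a common clock, which is why accurate, synchronous capture comes first in the list.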

Emerging power applications are the direct beneficiaries of rapid data capture, delivery, processing, and retrieval. One such example is the transition from conventional state estimation to direct state calculation. Since the early 1960s, the power grid has employed supervisory control and data acquisition (SCADA) technology for many of its real-time requirements, such as balancing load against supply, demand response, and contingency detection and analysis. SCADA uses a slow, cyclic polling architecture in which decisions are based on unsynchronized measurements that may be several seconds old. Consequently, the estimated state lags the actual state most of the time. Thus, state estimation gives very limited insight and visibility into the grid’s actual operational status. In contrast, tightly time-synchronized PMU data streams deliver data under strict quality of service (QoS) guarantees (low latency and high availability), allowing control centers to perform direct state calculations and measurements. The availability of such time-synchronized status data makes creating a real-time picture of the grid’s operational state much more realistic [5].
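The difference between polled and synchronized measurements can be sketched numerically. The example below is a simplified model, not a description of any real SCADA or PMU deployment: the 4-second polling cycle, the 30 samples per second reporting rate, the evenly spaced polling slots, and all function names are assumptions chosen for illustration. It computes the timestamp of the latest reading from each device and the resulting skew across one snapshot.

```python
import math


def polled_timestamps(num_devices, cycle_seconds, now):
    """Time of the latest reading per device under cyclic polling.

    Device i is assumed to be polled at offset i * cycle / num_devices
    within every cycle.
    """
    slot = cycle_seconds / num_devices
    stamps = []
    for i in range(num_devices):
        offset = i * slot
        completed_cycles = math.floor((now - offset) / cycle_seconds)
        stamps.append(offset + completed_cycles * cycle_seconds)
    return stamps


def synchronized_timestamps(num_devices, rate_hz, now):
    """All devices sample on the same clock tick, so they share one time."""
    latest_tick = math.floor(now * rate_hz) / rate_hz
    return [latest_tick] * num_devices


def skew(stamps):
    """Spread between the oldest and newest reading in a snapshot."""
    return max(stamps) - min(stamps)


polled = polled_timestamps(num_devices=4, cycle_seconds=4.0, now=10.0)
synced = synchronized_timestamps(num_devices=4, rate_hz=30, now=10.0)

print(polled, skew(polled))  # [8.0, 9.0, 10.0, 7.0] 3.0
print(synced, skew(synced))  # [10.0, 10.0, 10.0, 10.0] 0.0
```

In this model the polled snapshot mixes readings taken up to 3 seconds apart, so any state derived from it describes no single instant, while the synchronized snapshot refers to one common time.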

There are also many myths surrounding the operations of a power grid in conjunction with big data and its efficient use. The following is a nonexhaustive list of some of these myths.

1. Timeliness: “Real time” is a relative term, and application requirements often dictate the timeliness needs. Modern software and hardware technologies offer many ways to deliver data on time over wide area networks with average bandwidth. One of them is selective packet dropping, which guarantees a minimum QoS while delivering information to recipients in a timely manner. Smart power grids will greatly benefit from these techniques.

2. Security and Safety: Security and safety are concerns often cited by decision makers when considering new technologies. While absolute security is impossible, most concerns arising from data security issues have been technically addressed. One large factor affecting security is human error and oversight, yet this side of security often receives insufficient emphasis. Instead, more and more emphasis is placed on the communication channels, and securing an already secure channel only adds performance losses and overhead.

3. Cost: The cost of maintaining information infrastructures has become a major ...