Part 2

Hearing and Listening to Music

10

Musical Structure

Time and Rhythm

Peter Martens and Fernando Benadon

Introduction

Structure is more familiar to us in things we see than in things we hear. We build structures to inhabit; we observe structures in the natural world such as trees or coral reefs. Musical structure is often visible, too; we can see it in the physical movements that produce musical sounds, in the dance moves drawn from musical rhythms, and in the notation used to represent musical ideas. While any of these visible manifestations of musical structure could serve as a useful place to start our treatment of structured musical time, it is perhaps easiest to begin with the visible traces that are captured in standard music notation.

A Beginning: Hearing a Beat

Consider the snippet of music below, a simple string of quarter notes, continuing indefinitely (Figure 10.1). No pitch, timbral, or tempo information is given, only unspecified events occurring in a particular sequential relationship.

Though they may look identical on the page, our understanding of these quarter notes morphs as they proceed in time. Our minds are built to predict the future, to extrapolate probabilities for possible future events based on past experience, and we do this unconsciously with all manner of stimuli (Huron, 2006, p. 3). If we’ve seen three ants emerge from a hole in the ground, we expect more to follow; this is a “what” expectation, and so we would be surprised if an earwig emerged next. Likewise, if we’ve heard five evenly-spaced drips from a leaky faucet hit the sink bottom, we expect more drips to occur with the same time interval between them. This is both a “what” and “when” expectation; we would be surprised by a large delay before drip number six, but we would also be surprised by a finger snap at the exact time that that sixth drip should occur.

These basic habits have a biological foundation (see Henry & Grahn, this volume). Successfully predicting the timing and type of future events confers a significant survival advantage on an organism, and the auditory system, operating as it does in full 360° surround, is one of the most important sensory inputs (if not the most important) in terms of this advantage. We bring these habits and reflexive skills to music listening. Returning to Figure 10.1, after we hear only the first two quarter notes we expect a third event to follow the second at the same time interval that separated the second from the first (Longuet-Higgins & Lee, 1982). More precisely, we expect the beginning, or onset, of the third note to follow the onset of the second by the same time span that separated the onsets of notes one and two. This span of time from onset to onset, or attack point to attack point, is often referred to as an interonset interval, or IOI. A single IOI is bounded by two events, and thus while two events make us expect a third event, just one IOI makes us expect future similar IOIs. Whichever way we refer to events in our environment, our minds grab hold of a periodicity: a sequence of temporally equidistant onsets.
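The relationship between onsets, IOIs, and the expected next event can be made concrete with a small sketch. It is an illustration only, not a model proposed in the literature cited here: the function names and the onset times (in seconds) are hypothetical, and the prediction simply projects the most recent IOI forward.

```python
def interonset_intervals(onsets):
    """Return the IOIs between consecutive onset times (same units as input)."""
    return [later - earlier for earlier, later in zip(onsets, onsets[1:])]


def predict_next_onset(onsets):
    """Project the most recent IOI forward from the last onset.

    Assumption: the listener expects the next IOI to equal the previous one.
    """
    if len(onsets) < 2:
        raise ValueError("need at least two onsets to define an IOI")
    return onsets[-1] + (onsets[-1] - onsets[-2])


# Hypothetical onset times in seconds for the first two quarter notes.
onsets = [0.0, 0.5]
print(interonset_intervals(onsets))  # [0.5]
print(predict_next_onset(onsets))    # 1.0
```

Two onsets yield one IOI, and that single IOI is enough to place an expected third onset in time.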

Figure 10.1 Repeating quarter notes.

Continuing our thought experiment with Figure 10.1 a step further, if the expectation of a third in-time note (or second equivalent IOI) is met, we have an even stronger expectation of yet another note/IOI with the same spacing in time as the previous, and so on. As the periodic sequence unfolds and our expectations are further validated, our ability to predict the timing of upcoming events is reinforced. This basic ability allows us to synchronize physical movements with other humans (as when playing in a band) or with the sound waves emanating from speakers or headphones (as when moving to a beat). The terms beat induction and entrainment are commonly used for this phenomenon, which may occur at a cognitive or neural level without physical manifestation (Honing, 2013).

Beat, Hierarchy, and Meter

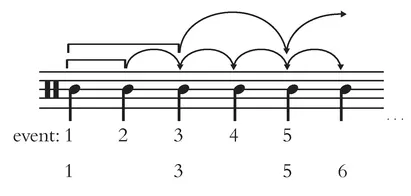

Our minds are not content to grasp this single periodicity, however. Once we hear events 1–3, a longer time interval becomes established between events 1 and 3 that leads us to expect event 5 further into the future, while at the same time expecting event 4 immediately next. Event 5 is now expected as a part of two hierarchically organized, or stacked, periodicities: the original event string 1,2,3,4,5 and the slower-moving event string 1,3,5. Being inextricably linked, these two simultaneous layers of events complement each other’s temporal expectations and increase our anticipation of event 5, as shown by the arrows in Figure 10.2.

It is important to note that we form these expectations unconsciously, and that by default we tend to nest layers by aligning each event in the slower layer with every other event in the faster layer, as in Figure 10.2 (Brochard et al., 2003). Listening to a dripping faucet or undifferentiated metronome clicks, we naturally impose a grouping, often duple, on the acoustically identical events, even from infancy (Bergeson & Trehub, 2005).1 Any grouping of multiple events into larger time spans sharpens our precision as we predict when future events will occur, and these groupings also lower the cognitive load by “chunking” a larger number of events in the incoming information stream into fewer discrete units (cf. Zatorre, Chen, & Penhune, 2007).

This nesting of shorter time spans within larger time spans, as conveyed by the brackets and arrows in Figure 10.2, can be applied recursively to include more than just two layers, as long as each event in a slower periodicity is simultaneously a part of all faster periodicities. Thus, our expectations develop over longer time spans in an orderly fashion, for instance, expecting a future 9th quarter note as part of three layers: 1,2,3,4,5,6,7,8,9 (original), 1,3,5,7,9 (half as fast), and 1,5,9 (one quarter as fast as the original). As we will see below, this nesting process does not continue indefinitely, but in this way we invest some moments in the future with greater “expectational weight” than others. We expect the 9th quarter note more strongly than the 8th, for example, because that 9th quarter note would fulfill the expectations of all three levels of periodicity. The 8th quarter note, at least in this musically impoverished abstraction, only serves to continue the original note-to-note periodicity.
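As a rough illustration of this accrual, the sketch below counts how many nested layers include each of the first nine quarter notes. Treating the layer count as a numeric “expectational weight” is a simplifying assumption made here for illustration; the text does not define such a measure, and the function name and the default periods (1, 2, 4) are hypothetical.

```python
def expectational_weight(position, periods=(1, 2, 4)):
    """Count how many periodic layers include a 1-indexed event position.

    A layer with period p contains events 1, 1 + p, 1 + 2p, and so on.
    Assumption: weight is simply the number of layers an event belongs to.
    """
    return sum(1 for p in periods if (position - 1) % p == 0)


weights = {n: expectational_weight(n) for n in range(1, 10)}
print(weights)
# {1: 3, 2: 1, 3: 2, 4: 1, 5: 3, 6: 1, 7: 2, 8: 1, 9: 3}
```

Event 9 belongs to all three layers while event 8 belongs only to the original one, which matches the contrast drawn in the paragraph above.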

Figure 10.2 Projection and accrual of temporal expectations.

The emergent pattern of expectational peaks, foothills, and valleys brought about by layers of nested expectations gives rise to what we experience as meter in music (Jones, 1992), and is encoded in music notation via a number of symbolic systems such as time signatures and note durations. When we speak of stronger or weaker beats in music, then, we are projecting the products of our own psychological processing onto a musical soundscape. It is often fairly easy to recognize these patterns when analyzing music, whether notated or not, and so we can speak of a piece’s metric hierarchy when referring to the typical strong-weak patterns of common meters. In 4/4 time, for example, we generally experience beat 1 as the “strongest” in each measure, followed by beat 3, and then beats 2 and 4 about equally.

Beat and Tempo

There are few musical terms used more commonly, yet less precisely, than beat and tempo. We have just used “beat” in the discussion above to refer to each of the four beats in a measure of 4/4 time. This usage implies that the beat in a measure of 4/4 corresponds to the notated quarter note, which is generally true. In terms of cognition, however, we define a beat without recourse to a notated meter, as a periodicity in music onto which we latch perceptually. But already we have two uses of the same term; a beat can refer to a single layer of motion in music (e.g. “this piece has a strong quarter-note beat”), while at the same time we use beat to label each occurrence within that string of events (e.g. “the trumpet enters on beat 4”). To use the term in either sense presupposes a process called beat-finding, whereby the listener infers a consistently articulated periodicity from the music, even if not all beats correspond to sounded events. Evidence for beat-finding can be seen in physical movements such as foot tapping or head nodding in synchrony with the music, and appears to be an almost exclusively human trait (cf. Patel et al., 2009).

Tempo is another term ubiquitous in music that is used in inconsistent and poorly defined ways. Let’s return to Figure 10.1 for a moment. If that rhythm’s speed is not too fast or too slow, each quarter note will be perceived as a beat, and that beat will establish the tempo of the music. We often think of tempo as corresponding to a single periodicity, typically expressed by musicians as beats per minute (BPM). However, as we saw earlier, the basic periodicity of our example gives rise to other nested (slower) periodicities. Further, real music is rarely as simple as a bare string of quarter notes. These two points lead to a key question. Of the several periodicities we can perceive as a beat even in a simple string of identical events, which of them would we define as determining a piece’s tempo? The simplest notion of beat and tempo revolves around the idea of an optimal range for tempo, centered on 100 BPM (Parncutt, 1994) or 120 BPM (Van Noorden & Moelants, 1999). While this turns out to be a complicated issue in fully musical contexts, we can make some general observations about the main beat in the well-known melody shown in Figure 10.3. If we were to hear that melody performed at quarter note = 120 BPM, most listeners would latch onto the quarter note as their beat, and would likely describe their experience of tempo in the piece as moderate. If the same melody were performed at quarter note = 200 BPM, however, many listeners would instead latch onto the half note at 100 BPM as their beat rate. This brings us to a curious paradox: While our perceived beat would be objectively slower in the second hearing, our judgment of tempo would likely be faster: bouncy or lively (or maybe even too fast!) instead of moderate.
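The arithmetic behind this example can be sketched in a few lines. This is a toy heuristic rather than a model from the cited studies: it assumes that a listener picks whichever metric level lies closest, on a logarithmic scale, to a single preferred rate, and the function names, the set of candidate levels, and the default of 120 BPM are all assumptions made for illustration.

```python
import math


def beat_period_seconds(bpm):
    """Convert a rate in beats per minute to the IOI between beats, in seconds."""
    return 60.0 / bpm


def likely_beat_level(quarter_bpm, preferred_bpm=120.0):
    """Pick the metric level whose rate is nearest preferred_bpm on a log scale.

    Assumption: only duple-related levels are considered as candidates.
    """
    levels = {"eighth": 2.0, "quarter": 1.0, "half": 0.5, "whole": 0.25}
    rates = {name: quarter_bpm * factor for name, factor in levels.items()}
    best = min(rates, key=lambda name: abs(math.log(rates[name] / preferred_bpm)))
    return best, rates[best]


print(likely_beat_level(120))    # ('quarter', 120.0)
print(likely_beat_level(200))    # ('half', 100.0)
print(beat_period_seconds(100))  # 0.6
```

Under these assumptions the faster performance yields a slower chosen beat (100 BPM, one beat every 0.6 seconds), even though more events pass per second, which is the paradox the paragraph describes.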

Most commonly, tempo is used by composers, performers, DJs, etc. to refer to the BPM rate of the beat level, often designated as a metronome marking (e.g. M.M. = 120). Such markings are one way to convey compositional intent in a score or piece of sheet music, or to compare one piece to another as in a BPM table when constructing a set of electronic dance music. Yet BPM rates do not necessarily correspond to a sensation of faster or slower, and this mismatch is the subject of ongoing research. Judgments of tempo are often based on a more general sense of event density, that is, how “busy” the musical texture is (e.g. Bisesi & Vicario, 2015), and are often encoded by subjective verbal descriptors such as “lively” or “moderate,” or by traditional Italian terms such as “largo” or “allegro con brio.” But even the composer Beethoven found the imprecision of these terms problematic, leading him to champion the objectivity of BPM rates and the newly invented metronome in the early nineteenth century (Grant, 2014, pp. 254ff.).

Foraging for Meter

Figure 10.3 F. J. Haydn, “Austrian Hymn.”

Our minds and bodies are constantly searching our environments for periodic information, and when it exists we attempt to synchronize with these periodicities neurally and physiologically (Merchant et al., 2015). Synchronization with complex periodic stimuli such as music begins with an initial beat, or tactus. London (2012, pp. 30–33) cites several hundred years of historical acknowledgement of the concept of tactus, and Honing (2012) argues that at least beat-finding (if not meter-finding) is innate, a basic and constant cognitive operation when listening to music.

While we might assume that the tactus in Figure 10.1 is obvious—it’s a string of quarter notes, after all—beat-finding is constrained and directed by several salience criteria that serve to mark some musical attacks for attention over others. All such c...