It is becoming apparent that future requirements for computing speed, system reliability, and cost effectiveness will entail the development of alternative computers to replace the traditional Von Neumann organization. As computing networks come into being, a new dream is now possible: distributed computing. Distributed computing brings transparent access to as much computer power and data as the user needs to accomplish any given task and, at the same time, achieves high performance and reliability objectives. Interest in distributed computing systems has grown rapidly in the last decade. The subject of distributed computing is diverse, and many researchers are investigating various issues concerning the structure of distributed hardware and the design of distributed software so that potential parallelism and fault tolerance can be exploited. We consider in this chapter some basic concepts and issues related to distributed computing and provide a list of the topics covered in this book.

1.1 Motivation

The development of computer technology can be characterized by different approaches to the way computers were used. In the 1950s, computers were serial processors, running one job at a time to completion. These processors were run from a console by an operator and were not accessible to ordinary users. In the 1960s, jobs with similar needs were batched together and run through the computer as a group to reduce the computer idle time. Other techniques were also introduced such as off-line processing by using buffering, spooling and multiprogramming. The 1970s saw the introduction of time-sharing, both as a means of improving utilization, and as a method to bring the user closer to the computer. Time-sharing was the first step toward distributed systems: users could share resources and access them at different locations. The 1980s were the decade of personal computing: people had their own dedicated machine. The 1990s are the decade of distributed systems due to the excellent price/performance ratio offered by microprocessor-based systems and the steady improvements in networking technologies.

Distributed systems can take different physical forms: a group of personal computers (PCs) connected through a communication network; a set of workstations that share not only file and database systems but also CPU cycles (in most cases a local process still has a higher priority than a remote process, where a process is a program in execution); or a processor pool, where terminals are not attached to any processor and all resources are truly shared without distinguishing local and remote processes.

Distributed systems are seamless; that is, the interfaces among functional units on the network are for the most part invisible to the user. The idea of distributed computing has been applied to database systems [16], [38], [49], file systems [4], [24], [33], [43], [54], operating systems [2], [39], [46], and general environments [19], [32], [35].

Another way of expressing the same idea is to say that the user views the system as a virtual uniprocessor, not as a collection of distinct processors. The main motivations in moving to a distributed system are the following:

Inherently distributed applications. Distributed systems have come into existence in some very natural ways, e.g., in our society people are distributed and information should also be distributed. In a distributed database system, for example, information is generated at different branch offices (sub-databases), so that local access can be done quickly. The system also provides a global view to support various global operations.

Performance/cost. The parallelism of distributed systems reduces processing bottlenecks and provides improved all-around performance, i.e., distributed systems offer a better price/performance ratio.

Resource sharing. Distributed systems can efficiently support information and resource (hardware and software) sharing for users at different locations.

Flexibility and extensibility. Distributed systems are capable of incremental growth and have the added advantage of facilitating modification or extension of a system to adapt to a changing environment without disrupting its operations.

Availability and fault tolerance. With the multiplicity of storage units and processing elements, distributed systems have the potential ability to continue operation in the presence of failures in the system.

Scalability. Distributed systems can be easily scaled to include additional resources (both hardware and software).

LeLann [23] discussed the aims and objectives of distributed systems and noted some of their distinctive characteristics by distinguishing between physical and logical distribution. Extensibility, increased availability, and better resource sharing are considered the most important objectives.

There are two main stimuli for the current interest in distributed systems [5], [22]: technological change and user needs. Technological changes are in two areas: advancements in micro-electronic technology have produced fast and inexpensive processors, and advancements in communication technology have resulted in the availability of highly efficient computer networks.

Long-haul, relatively slow communication paths between computers have existed for a long time, but only recently has the technology for fast, inexpensive, and reliable local area networks (LANs) emerged. These LANs typically run at 10-100 Mbps (megabits per second). In response, metropolitan area networks (MANs) and wide area networks (WANs) are becoming faster and more reliable. Normally, LANs span areas with diameters of not more than a few kilometers, MANs cover areas with diameters of up to a few dozen kilometers, and WANs extend worldwide. Recently the asynchronous transfer mode (ATM) has been considered the emerging technology for the future, and it can provide data transmission rates of up to 1.2 Gbps (gigabits per second) for both LANs and WANs.

As for user needs, many enterprises are cooperative in nature (e.g., offices, multinational companies, and university computing centers) and require sharing of resources and information.

1.2 Basic Computer Organizations

The Von Neumann organization of a computer consists of a CPU, a memory unit, and I/O. The CPU is the brain of the computer. It executes programs stored in memory by fetching instructions, examining them, and then executing them one after another. The memory unit stores instructions (a sequence of instructions is called a program) and data. I/O gets instructions and data into and out of processors.

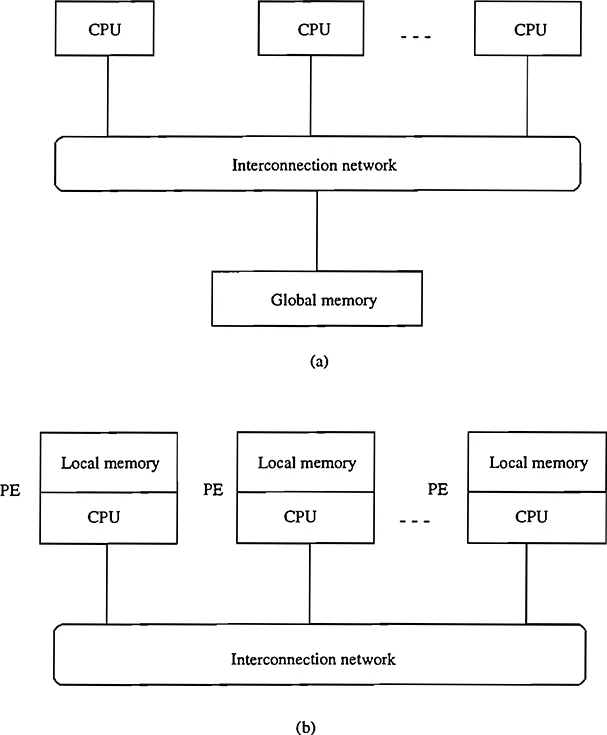

FIGURE 1.1

Two basic computer structures: (a) physically shared memory structure and (b) physically distributed memory structure.

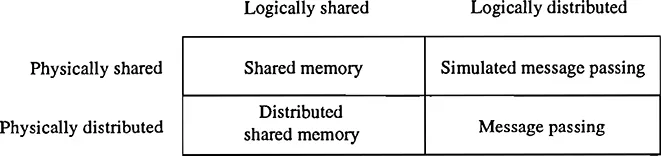

FIGURE 1.2

Physically versus logically shared/distributed memory.

If the CPU and memory unit can be replicated, two basic computer organizations are possible:

Physically shared memory structure (Figure 1.1 (a)) has a single memory address space shared by all the CPUs. Such a system is also called a tightly coupled system. In a physically shared memory system, communication between CPUs takes place through the shared memory using read and write operations.

Physically distributed memory structure (Figure 1.1 (b)) does not have shared memory; each CPU has its own attached local memory. The CPU and local memory pair is called a processing element (PE) or simply a processor. Such a system is sometimes referred to as a loosely coupled system. In a physically distributed memory system, communication between processors is done by passing messages across the interconnection network through a send command at the sending processor and a receive command at the receiving processor.
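The two communication styles can be contrasted with a small sketch. It is only an illustration under stated assumptions, not code from any particular system: Python threads inside one process stand in for CPUs, a lock-protected variable stands in for the shared memory, and a `queue.Queue` stands in for the interconnection network. The function names `shared_memory_sum` and `message_passing_sum` and the parameter `num_workers` are hypothetical.

```python
import queue
import threading


def shared_memory_sum(values, num_workers=4):
    """Workers communicate by reading and writing one shared location."""
    total = [0]                  # the shared location, visible to every worker
    lock = threading.Lock()      # serializes the read-modify-write on total

    def worker(chunk):
        partial = sum(chunk)     # computed on private data
        with lock:
            total[0] += partial  # read and write of the shared location

    chunks = [values[i::num_workers] for i in range(num_workers)]
    threads = [threading.Thread(target=worker, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return total[0]


def message_passing_sum(values, num_workers=4):
    """Workers keep only local data and send their results as messages."""
    channel = queue.Queue()      # stands in for the interconnection network

    def worker(chunk):
        channel.put(sum(chunk))  # "send" at the sending processor

    chunks = [values[i::num_workers] for i in range(num_workers)]
    threads = [threading.Thread(target=worker, args=(c,)) for c in chunks]
    for t in threads:
        t.start()
    # "receive" at the receiving processor: one message per worker
    result = sum(channel.get() for _ in range(num_workers))
    for t in threads:
        t.join()
    return result
```

Both functions compute the same sum; they differ only in how the partial results reach the collector. Because the threads here run in one process and physically share memory, the second function models message passing only at the programming level.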

In Figure 1.1, the interconnection network is used to connect different units of the system and to support movements of data and instructions. The I/O unit is not shown, but it is by no means less important.

However, the choice of a communication model does not have to be tied to the physical system. A distinction can be made between the physical sharing presented by the hardware (Figure 1.1) and the logical sharing presented by the programming model. Figure 1.2 shows four possible combinations of sharing.

The box in Figure 1.2 labeled shared mem...