SHANNON AND WEAVER’S MODEL (1949; WEAVER, 1949b)

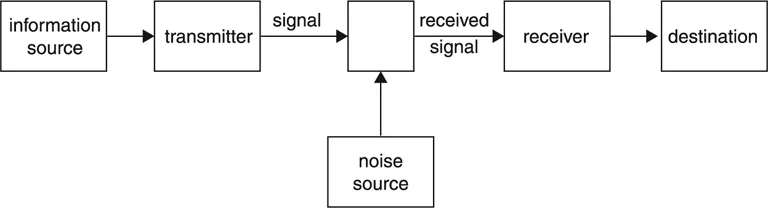

Shannon and Weaver’s basic model of communication presents it as a simple linear process. Its simplicity has attracted many derivatives, and its linear, process-centred nature has attracted many critics. But we must look at the model (figure 2) before we consider its implications and before we attempt to evaluate it. The model is broadly understandable at first glance, and its simplicity and linearity stand out clearly. We will return to the named elements in the process later.

Shannon and Weaver identify three levels of problems in the study of communication. These are:

| Level | Question | Kind of problem |
| --- | --- | --- |
| Level A | How accurately can the symbols of communication be transmitted? | technical problems |
| Level B | How precisely do the transmitted symbols convey the desired meaning? | semantic problems |
| Level C | How effectively does the received meaning affect conduct in the desired way? | effectiveness problems |

The technical problems of level A are the simplest to understand and these are the ones that the model was originally developed to explain.

The semantic problems are again easy to identify, but much harder to solve, and range from the meaning of words to the meaning that a US newsreel picture might have for a Russian. Shannon and Weaver consider that the meaning is contained in the message: thus improving the encoding will increase the semantic accuracy. But there are also cultural factors at work here which the model does not specify: the meaning is at least as much in the culture as in the message.

The effectiveness problems may at first sight seem to imply that Shannon and Weaver see communication as manipulation or propaganda: that A has communicated effectively with B when B responds in the way A desires. They do lay themselves open to this criticism, and hardly deflect it by claiming that the aesthetic or emotional response to a work of art is an effect of communication.

They claim that the three levels are not watertight, but are interrelated and interdependent, and that their model, despite its origin in level A, works equally well on all three levels. The point of studying communication at each and all of these levels is to understand how we may improve the accuracy and efficiency of the process.

But let us return to our model. The source is seen as the decision maker; that is, the source decides which message to send, or rather selects one out of a set of possible messages. This selected message is then changed by the transmitter into a signal which is sent through the channel to the receiver. For a telephone the channel is a wire, the signal an electrical current in it, and the transmitter and receiver are the telephone handsets. In conversation, my mouth is the transmitter, the signal is the sound waves which pass through the channel of the air (I could not talk to you in a vacuum), and your ear is the receiver.
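The chain of stages just described can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the two-message set, and the choice of UTF-8 bytes as the "signal" are all hypothetical and come from neither Shannon nor Weaver.

```python
# A minimal sketch of the linear process: source -> transmitter -> channel -> receiver.
# Every name here is hypothetical and chosen for illustration only.

MESSAGES = ["yes", "no"]  # the set of possible messages open to the source


def source(choice: int) -> str:
    """The source decides which message to send by selecting one from the set."""
    return MESSAGES[choice]


def transmitter(message: str) -> bytes:
    """The transmitter changes the message into a signal (assumed here: UTF-8 bytes)."""
    return message.encode("utf-8")


def channel(signal: bytes) -> bytes:
    """The channel carries the signal; this idealised channel adds nothing to it."""
    return signal


def receiver(signal: bytes) -> str:
    """The receiver changes the signal back into a message for the destination."""
    return signal.decode("utf-8")


def communicate(choice: int) -> str:
    """Run one message through the whole linear chain."""
    return receiver(channel(transmitter(source(choice))))


print(communicate(0))  # yes
```

Each stage takes only the output of the stage before it, which is exactly the linearity that critics of the model object to.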

Obviously, some parts of the model can operate more than once. In the telephone message for instance, my mouth transmits a signal to the handset which is at this moment a receiver, and which instantly becomes a transmitter to send the signal to your handset, which receives it and then transmits it via the air to your ear. Gerbner’s model, as we will see later, deals more satisfactorily with this doubling up of certain stages of the process.

Noise

The one term in the model whose meaning is not readily apparent is noise. Noise is anything that is added to the signal between its transmission and reception that is not intended by the source. This can be distortion of sound or crackling in a telephone wire, static in a radio signal, or “snow” on a television screen. These are all examples of noise occurring within the channel and this sort of noise, on level A, is Shannon and Weaver’s main concern. But the concept of noise has been extended to mean any signal received that was not transmitted by the source, or anything that makes the intended signal harder to decode accurately. Thus an uncomfortable chair during a lecture can be a source of noise—we do not receive messages through our eyes and ears only. Thoughts that are more interesting than the lecturer’s words are also noise.
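Level-A noise of the kind described here can be imitated with a toy channel that occasionally corrupts what passes through it. The sketch below assumes, purely for illustration, that the signal is a list of 0s and 1s and that noise flips each one independently with a fixed probability; the names `noisy_channel` and `count_errors` are hypothetical.

```python
import random


def noisy_channel(signal, flip_probability, rng):
    """Return a copy of the signal in which each bit is flipped with the given probability.

    The flips stand in for crackle or static: changes that arise in the
    channel and were not intended by the source.
    """
    return [bit ^ 1 if rng.random() < flip_probability else bit for bit in signal]


def count_errors(sent, received):
    """Count the positions at which the received signal differs from the one sent."""
    return sum(s != r for s, r in zip(sent, received))


sent = [1, 0, 1, 1, 0, 0, 1, 0]
received = noisy_channel(sent, flip_probability=0.1, rng=random.Random(1949))
print(count_errors(sent, received), "of", len(sent), "symbols were altered by noise")
```

A flip probability of zero gives a noiseless channel; raising it makes the received signal progressively harder to decode accurately.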

Shannon and Weaver admit that the level-A concept of noise needs extending to cope with level-B problems. They distinguish between semantic noise (level B) and engineering noise (level A) and suggest that a box labelled “semantic receiver” may need inserting between the engineering receiver and the destination. Semantic noise is defined as any distortion of meaning occurring in the communication process which is not intended by the source but which affects the reception of the message at its destination.

Noise, whether it originates in the channel, the audience, the sender, or the message itself, always confuses the intention of the sender and thus limits the amount of desired information that can be sent in a given situation in a given time. Overcoming the problems caused by noise led Shannon and Weaver into some further fundamental concepts.

Information: Basic Concept

Despite their claims to operate on levels A, B, and C, Shannon and Weaver do, in fact, concentrate their work on level A. On this level, their term information is used in a specialist, technical sense, and to understand it we must erase from our minds its usual everyday meaning.

Information on level A is a measure of the predictability of the signal, that is, of the number of choices open to its sender. It has nothing to do with its content. A signal, we remember, is the physical form of a message: sound waves in the air, light waves, electrical impulses, touchings, or whatever. So, I may have a code that consists of two signals, a single flash of a light bulb or a double flash. The information contained by either of these signals is identical, because each is 50 per cent predictable. This is regardless of what they actually mean: one flash could mean “Yes”, two flashes “No”, or one flash could mean the whole of the Old Testament, and two flashes the New. In this case “Yes” contains the same amount of information as the “Old Testament”. The information contained by the letter “u” when it follows the letter “q” in English is nil because it is totally predictable.
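The link between predictability and amount of information can be made concrete with the standard logarithmic measure. The sketch below assumes that all the signals in a code are equally likely, which the two-flash example implies but does not state; the function names are hypothetical.

```python
import math


def bits_from_choices(number_of_choices: int) -> float:
    """Information, in bits, of one signal drawn from equally likely choices."""
    return math.log2(number_of_choices)


def bits_from_probability(probability: float) -> float:
    """Information, in bits, of a signal that occurs with the given probability."""
    return math.log2(1 / probability)


# One flash or two: two equally likely signals, each 50 per cent predictable.
print(bits_from_choices(2))        # 1.0
print(bits_from_probability(0.5))  # 1.0

# "u" after "q": only one choice is open, so the signal is totally predictable.
print(bits_from_choices(1))        # 0.0
```

Nothing in the calculation refers to what a flash means, which is why “Yes” and the Old Testament come out with the same figure.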

Information: Further Implications

We can use the unit “bit” to measure information. The word “bit” is a compression of “binary digit” and means, in practice, a Yes/No choice. These binary choices, or binary oppositions, are the basis of computer language, and many psychologists claim that they are the way in which our brain operates too. For instance, if we wish to assess someone’s age we go through a rapid series of binary choices: are they old or are they young; if young, are they adult or pre-adult; if pre-adult, are they teenager or pre-teenager; if pre-teenager, are they school-age or pre-school; if pre-school, are they toddler or baby? The answer is baby. Here, in this system of binary choices, the word “baby” contains five bits of information because we have made five choices along the way. Here, of course, we have slipped easily on to level B, because these are semantic categories, or categories of meaning, not simply of signal. “Information” at this level is much closer to our normal use of the term. So if we say someone is young we give one bit of information only, that he is not old. If we say he is a baby we give five bits of information if, and it is a big if, we use the classifying system detailed above.

This is the trouble with the concept of “information” on level B. The semantic systems are not so precisely defined as are the signal systems of level A, and thus the numerical measuring of information is harder, and some would say irrelevant. There is no doubt that a letter (i.e. part of the signal system of level A) contains at most five bits of information. (Ask if it is in the first or second half of the alphabet, then in the first or second half of the half you have chosen, and so on. Five questions, or binary choices, are always enough to identify any letter in the alphabet.) But there is considerable doubt about the possibility of measuring meaning in the same sort of way.
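The halving procedure in the parenthesis above can be followed step by step. The sketch below assumes the 26-letter English alphabet and counts the Yes/No questions needed for each letter; `identify` is a hypothetical name. Because 26 is not a power of two, the halves are sometimes unequal, so some letters are pinned down in four questions, but none needs more than five.

```python
import string


def identify(letter, alphabet=string.ascii_lowercase):
    """Find a letter by repeated halving; return it with the number of questions asked."""
    if letter not in alphabet:
        raise ValueError(f"{letter!r} is not in the alphabet")
    candidates = alphabet
    questions = 0
    while len(candidates) > 1:
        middle = len(candidates) // 2
        first_half, second_half = candidates[:middle], candidates[middle:]
        questions += 1  # one binary choice: first half or second half?
        candidates = first_half if letter in first_half else second_half
    return candidates, questions


counts = [identify(letter)[1] for letter in string.ascii_lowercase]
print(min(counts), max(counts))  # 4 5
```

No comparable procedure is available for meaning, since there is no agreed, exhaustive list of semantic categories to halve.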

Obviously, Shannon and Weaver’s engineering and mathematical background shows in their emphasis. In the design of a telephone system, the critical factor is the number of signals it can carry. What people actually say is irrelevant. The question for us, however, is how useful a theory with this sort of mechanistic base can be in the broader study of communication. Despite the doubts about the value of measuring meaning and information numerically, relating the amount of information to the number of choices available is insightful, and is broadly similar to insights into the nature of language provided by linguistics and semiotics, as we will see later in this book. Notions of predictability and choice are vital in understanding communication.