eBook - ePub

Complex Information Processing

The Impact of Herbert A. Simon

- 480 pages

- English


About this book

Here, several leading experts in the area of cognitive science summarize their current research programs, tracing Herbert A. Simon's influence on their own work -- and on the field of information processing at large. Topics covered include problem-solving, imagery, reading, writing, memory, expertise, instruction, and learning. Collectively, the chapters reveal a high degree of coherence across the various specialized disciplines within cognition -- a coherence largely attributable to the initial unity in Simon's seminal and pioneering contributions.


I

REPRESENTATION AND CONSTRAINT IN COMPLEX PROCESSING

1

The Lateralization of BRIAN: A Computational Theory and Model of Visual Hemispheric Specialization

Stephen M. Kosslyn, Michael A. Sokolov, and Jack C. Chen

It is to physiology that we must turn for an explanation of the limits of adaptation. (Simon, 1981)

At first glance, a chapter on cerebral lateralization may seem out of place in this book. After all, this book is a tribute to Herbert Simon, who is not best known for his interest in neuropsychology. However, this apparent incongruity illustrates why it is entirely appropriate to have this chapter in this volume: Perhaps the most remarkable aspect of Professor Simon's career has been the very far-reaching impact of his ideas. This chapter is a good illustration of how far some of those ideas have spread beyond his original interests. Powerful ideas have a tendency to do that, and the idea of thought as computation, the concept of hierarchical and nearly decomposable systems, the emphasis on the importance of the requirements of the task in shaping cognitive processing, and so on, are very powerful ideas. We hope to demonstrate not only the applicability of these ideas to neuropsychology, but the added deductive and inferential power they confer.

INTRODUCTION

It has been known at least since the middle of the nineteenth century that the two halves of the brain are not equally important in all tasks (e.g., see Jackson, 1932/1874). There is no question that the left cerebral hemisphere typically has a special role in language production and comprehension (e.g., see Milner & Rasmussen, 1966). Similarly, there is good evidence that the right cerebral hemisphere typically has a special role in perception (see De Renzi, 1982). However, these broad generalizations almost exhaust the consensus in the field. More precise and detailed characterizations of hemispheric specialization have eluded theorists (e.g., see Bradshaw & Nettleton, 1981; Bryden, 1982; Springer & Deutsch, 1981).

Part of the reason that theory has not progressed rapidly in neuropsychology is that experiments often do not replicate (e.g., for a typical review see White, 1969). Indeed, the failure to replicate is so pervasive that it seems unlikely that it is due merely to sloppy experimentation (cf. Hardyck, 1983). At least some of the difficulty may lie in differences among the subjects who were tested. If there are widespread individual differences, then the fact that experiments typically have small numbers of subjects would contribute to the variability in the reported results (see De Renzi, 1982). This chapter explores a computational theory of individual differences in the hemispheric specialization of visual processing. Very straightforward computational ideas allow us to understand how the two hemispheres can be specialized in a wide variety of ways.

The first part of this chapter is an overview of a theory of the processing subsystems of high-level vision. Following Simon's lead, we believe that computational theories of function are as necessary in neuropsychology as in cognitive psychology proper. If one does not have a reasonably clear idea of what is being done, it is rather difficult to discuss how it is done differently in the two hemispheres. The second part of this chapter is an overview of a simple mechanism that will produce lateralization of the system outlined in the first part. This mechanism accounts for how experience can produce individual differences in lateralization, depending on a person's innate predispositions for processing; this mechanism has four free parameters, the values of which can presumably differ from individual to individual. The third part of the chapter describes a computer simulation of the lateralization mechanism operating on the visual subsystems. This simulation is called BRIAN, short for Bilateral Recognition and Imagery Adaptive Networks (for a detailed description, see Kosslyn, Flynn, Amsterdam, & Wang, in press). We summarize here the results of computer simulations that explore the impact of varied individual differences parameter values on lateralization. These results illustrate how different qualitative phenomena can arise from underlying quantitative variation. Finally, we conclude with an examination of the virtues and drawbacks of the present approach.

HIGH-LEVEL VISION

The processes that underlie object recognition can be divided into two sorts, low-level (or early) and high-level (or late). Low-level visual processes are concerned with segregating figure from ground on the basis of properties of the sensory input; these processes detect edges, grow regions of homogeneous values, derive structure from motion, derive depth from disparities in the images striking the two eyes, and so on (see chap. 3 of Marr, 1982). High-level visual processes involve the access or use of previously stored information. As such, high-level processes are used in recognition, where input is entered into memory and compared with previously stored representations; high-level processes are also used in navigation and mental imagery, but we shall not dwell on these topics here (see Kosslyn, 1987).

In this section we briefly summarize a theory of some of the processing subsystems used in high-level vision. Each subsystem is dedicated to carrying out one part of the information processing necessary to perform object recognition (cf. chap. 7 of Simon, 1981). Space limitations prevent our providing the full rationale for this particular decomposition; Kosslyn et al. (in press) develop detailed justification for this decomposition and also provide additional discussion of various issues raised by the theory and approach. As discussed in Kosslyn et al., in developing the theory we began by developing a taxonomy of the fundamental functional characteristics of the human recognition system, and then tried to ensure that the theory and model are not in principle incapable of producing these behaviors. Furthermore, we tried to ensure that the theory is consistent with the structure of the machine whose function we are describing, namely the brain. That is, we used brain anatomy and physiology as motivation for the theory (see Kosslyn, 1987; Kosslyn et al., in press for additional details; see also Feldman, 1985, for a similar project predating this one). In any event, the thrust of this chapter is not to develop or defend the theory of subsystems. Rather, we introduce the subsystems here only to allow us later to consider how they are affected by the lateralization mechanism.

SUBSYSTEMS OF HIGH-LEVEL VISUAL RECOGNITION

The theory of subsystems is cast at a relatively coarse level of analysis; it specifies the nature of the constituent processing units used in high-level object recognition. We delineate subsystems by describing what they compute; we do not attempt to characterize how the subsystems actually carry out these computations (cf. Marr, 1982). The subsystems we formulate are not offered as the ultimate primitive processing units. Rather, we hypothesize that they delineate correct boundaries of separate subsystems at a macro level of analysis (cf. Simon, 1981). We have adopted a strong “hierarchical decomposition” constraint: Further subdivision must respect the boundaries posited at this coarser level; we cannot later posit more fine-grained subsystems that cut across the boundaries of those we hypothesize here.

Two Major Systems: What versus Where

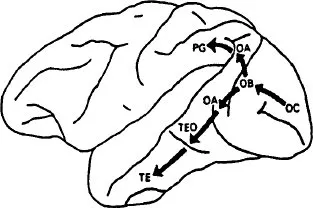

One of the most remarkable functional properties of the visual system is its ability to recognize objects from different vantage points. It now appears that we know important aspects of the neural mechanism that underlies our ability to recognize objects when they appear in different positions in the visual field (so that the image falls on different parts of the retina). The neurons that apparently represent object properties (i.e., shape, color, texture) have very large receptive fields, responding when the stimulus falls in a wide range of positions. That is, the shape recognition system seems to ignore the location of objects (within a large range), which allows it to generalize over a range of positions (see Gross & Mishkin, 1977). This solution to the problem of recognizing objects in different positions requires a second system to represent location, given that we do in fact know where an object is. And there is now good evidence that information about “what” and “where” is processed separately in the high-level vision system. For example, Ungerleider and Mishkin (1982) summarized much evidence for the existence of “two cortical visual systems.” Figure 1.1 illustrates the ventral system, which apparently analyzes object properties, and the dorsal system, which apparently analyzes location (for a brief summary of data supporting this distinction, see Kosslyn, 1987).
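The division of labor described above can be illustrated with a toy sketch. This is not the authors' model or any part of BRIAN; the grid representation, the template matcher, and every name below (`what_response`, `where_response`, `place`, `L_SHAPE`) are hypothetical choices made only for illustration. The "what" function reports whether a shape is present while ignoring its position, and the "where" function reports its position.

```python
# Toy illustration only: binary grids and exact template matching are
# assumptions for this sketch, not claims about the visual system.

def match_score(image, template, row, col):
    """Count template cells that agree with the image patch at (row, col)."""
    return sum(
        1
        for dr, template_row in enumerate(template)
        for dc, cell in enumerate(template_row)
        if image[row + dr][col + dc] == cell
    )


def candidate_positions(image, template):
    """All top-left positions at which the template fits inside the image."""
    rows = len(image) - len(template) + 1
    cols = len(image[0]) - len(template[0]) + 1
    return [(r, c) for r in range(rows) for c in range(cols)]


def what_response(image, template):
    """'What' pathway: True if the shape occurs anywhere (position ignored)."""
    full = len(template) * len(template[0])
    return any(
        match_score(image, template, r, c) == full
        for r, c in candidate_positions(image, template)
    )


def where_response(image, template):
    """'Where' pathway: top-left position of the best-matching patch."""
    return max(
        candidate_positions(image, template),
        key=lambda pos: match_score(image, template, *pos),
    )


def place(template, row, col, height=6, width=6):
    """Build an empty grid and draw the template at (row, col)."""
    image = [[0] * width for _ in range(height)]
    for dr, template_row in enumerate(template):
        for dc, cell in enumerate(template_row):
            image[row + dr][col + dc] = cell
    return image


L_SHAPE = [[1, 0],
           [1, 1]]

if __name__ == "__main__":
    for position in [(0, 0), (3, 4)]:
        image = place(L_SHAPE, *position)
        print(what_response(image, L_SHAPE), where_response(image, L_SHAPE))
```

Moving the shape leaves the output of `what_response` unchanged, which is why a separate computation such as `where_response` is needed to recover location.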

Input to High-level Recognition Subsystems

The input to the shape and location systems is the output from the low-level vision system. This output is represented as patterns of activation in a series of retinotopic maps (see Cowey, 1985; Feldman, 1984; Van Essen, 1985; Van Essen & Maunsell, 1983). These maps preserve (roughly) the local geometry of the planar projection of the visible surface of an object (but with greater area typically being allocated to the foveal regions). We conceive of the circumstriate maps as a single functional structure, which we call the visual buffer.

Attention Window

There is much more information in the visual buffer than can be processed at once; thus, one must select only some information for further processing. We posit an attention window that selects a region of space (within the visual buffer) for further processing; the location and scope of the window apparently can be varied (cf. Cave & Kosslyn, in press; Larsen & Bundesen, 1978; Treisman & Gelade, 1980). The contents of the window are sent to the ventral system for shape recognition, and to the dorsal system for location representation. This claim is consistent with results reported by Moran and Desimone (1985), who found that cells in the ventral system (in areas V4 and IT) were inhibited when the trigger stimulus fell outside the region being attended to, although it still fell within the cell's receptive field.
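A minimal sketch of the selection step follows, assuming the visual buffer can be treated as a two-dimensional array. The class `AttentionWindow`, the function `route`, and the decision to pass the window's offset to the dorsal side are hypothetical illustrations, not details given in the chapter.

```python
from dataclasses import dataclass


@dataclass
class AttentionWindow:
    """A rectangular region of the visual buffer (illustrative assumption)."""
    row: int
    col: int
    height: int
    width: int

    def select(self, buffer):
        """Return only the part of the buffer that falls inside the window."""
        rows = buffer[self.row:self.row + self.height]
        return [r[self.col:self.col + self.width] for r in rows]


def route(buffer, window):
    """Send the window's contents to both systems.

    The ventral side receives the contents for shape recognition. The dorsal
    side receives the same contents; including the window offset is an
    assumption made here so that location can be expressed in buffer
    coordinates.
    """
    contents = window.select(buffer)
    return {
        "ventral": contents,
        "dorsal": {"contents": contents, "offset": (window.row, window.col)},
    }


if __name__ == "__main__":
    # A 4 x 4 buffer whose cells are numbered 0..15 for easy inspection.
    visual_buffer = [[4 * r + c for c in range(4)] for r in range(4)]
    window = AttentionWindow(row=1, col=1, height=2, width=2)
    print(route(visual_buffer, window))
```

Changing `row` and `col` moves the window, and changing `height` and `width` alters its scope, mirroring the two adjustable properties mentioned in the text.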

FIG. 1.1. The dorsal and ventral systems (modified from Mishkin, Ungerleider, & Macko, 1983). The inferior temporal lobe corresponds roughly to areas TE and TEO illustrated here. Reprinted by permission of author.

Subsystems of the Ventral System

Kosslyn et al. (in press) argue that the ventral system can be decomposed into three types of subsystems, each of which is dedicated to performing a specific type of computation.

Preprocessing

Objects must be recognized when they subtend different visual angles and are seen from different points of view. Lowe (1987a, 1987b) pointed out that some aspects of a stimulus (provided they are visible) remain invariant under translation, scale changes, and rotations. For example, parallel lines (representing parallel edges) remain roughly parallel, points at which lines meet are similarly preserved, and so on. The preprocessor highlights such invariant properties of the input and sends them to the pattern activation subsystem, where they serve as cues to access stored representations of shape.
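The invariance of parallelism can be checked numerically. The sketch below is an illustration under simplifying assumptions, not Lowe's method or the preprocessing subsystem itself: it applies planar translation, uniform scaling, and rotation to line segments, where parallelism is preserved exactly (under full perspective projection it holds only roughly, as the text notes). The function names are hypothetical.

```python
import math


def transform(point, scale=1.0, angle=0.0, shift=(0.0, 0.0)):
    """Rotate by `angle` (radians), scale uniformly, then translate a 2-D point."""
    x, y = point
    c, s = math.cos(angle), math.sin(angle)
    return (scale * (c * x - s * y) + shift[0],
            scale * (s * x + c * y) + shift[1])


def transform_segment(segment, **kwargs):
    """Apply the same transformation to both endpoints of a segment."""
    return tuple(transform(p, **kwargs) for p in segment)


def are_parallel(seg_a, seg_b, tol=1e-9):
    """Two segments are parallel when the cross product of their directions is ~0."""
    (ax0, ay0), (ax1, ay1) = seg_a
    (bx0, by0), (bx1, by1) = seg_b
    ux, uy = ax1 - ax0, ay1 - ay0
    vx, vy = bx1 - bx0, by1 - by0
    cross = ux * vy - uy * vx
    # Scale the tolerance by the segment lengths so the test is size-independent.
    size = math.hypot(ux, uy) * math.hypot(vx, vy)
    return abs(cross) <= tol * max(1.0, size)


if __name__ == "__main__":
    edge_a = ((0.0, 0.0), (2.0, 0.0))
    edge_b = ((0.0, 1.0), (3.0, 1.0))
    change = dict(scale=2.5, angle=0.7, shift=(4.0, -1.0))
    moved_a = transform_segment(edge_a, **change)
    moved_b = transform_segment(edge_b, **change)
    print(are_parallel(edge_a, edge_b), are_parallel(moved_a, moved_b))
```

A property that survives such changes is a useful cue for accessing stored shape representations, because it does not depend on where the object is, how large it appears, or how it is oriented in the image plane.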

Pattern Activation

The information from the preprocessing subsystem is used to access stored representations of parts and global shapes of objects. A range of inputs will access the same representation, which allows the system to ignore irrelevant shape variations, such as those that occur when objects come in a variety of sizes and shapes (e.g., books, feet, or dinner forks).

The cues from the preprocessing subsystem often may be sufficient to activate a single shape representation, particularly if the object subtends a relatively small visual angle and is rigid (and hence does not vary from instance to instance). However, such encodings may be insufficient if (a) the object subtends a relatively large visual angle, requiring multiple eye fixations to encode, or (b) the object is flexible, and hence its shape may not correspond to one stored in the pattern activation subsystem (e.g., a sleeping dog curled up oddly). In these cases, a more elaborate encoding process will be necessary, as will be discussed below.

Feature Detection

Shape is not the only important property of objects, and some visual tasks do not involve encoding shapes into memory. Thus, there must be mechanisms that encode other properties of the input, such as color and texture. It is well known that brain damage can selectively disrupt color encoding without disrupting shape encoding per se; indeed, shape-color associations can be disrupted separately from color or shape encoding (e.g., see Benton, 1985).

Subsystems in the Dorsal System

While an object's properties are being processed in the ventral system, its location is processed in the dorsal system. According to the Kosslyn et al. (in press) theory, this system has three major components.

Spatiotopic Mapping

Locations in the visual buffer are specified relative to the retina, not space; such coordinates are useless for representing the locations of objects in space or the relative positions of parts of an object. This subsystem uses retinotopic position, eye position, head position, and body position to compute where an object or part is located in space. Andersen, Essick, and Siegel (1985) found cells in the inferior parietal lobule (part of the dorsal system) that respond selectively to location in space, gated by eye position; this finding is consistent with the inference that this structure is involved in computing spatiotopic coo...

Table of contents

- Front Cover

- Half Title

- Title Page

- Copyright

- Dedication

- Contents

- List of Contributors

- Introduction

- PART I REPRESENTATION AND CONSTRAINT IN COMPLEX PROCESSING

- PART II EXPERTISE IN HUMAN AND ARTIFICIAL SYSTEMS

- PART III INSTRUCTION AND SKILL ACQUISITION

- PART IV SCIENCE AND THOUGHT

- EPILOGUE

- How It All Got Put Together

- Author Index

- Subject Index


Complex Information Processing, by David Klahr and Kenneth Kotovsky, is available in PDF and/or ePUB format.