- 146 pages

- English


About this book

User Interface Inspection Methods succinctly covers five inspection methods: heuristic evaluation, perspective-based user interface inspection, cognitive walkthrough, pluralistic walkthrough, and formal usability inspections.

Heuristic evaluation is perhaps the best-known inspection method, requiring a group of evaluators to review a product against a set of general principles. The perspective-based user interface inspection is based on the principle that different perspectives will find different problems in a user interface. In the related persona-based inspection, colleagues assume the roles of personas and review the product based on the needs, background, tasks, and pain points of the different personas. The cognitive walkthrough focuses on ease of learning.

Most of the inspection methods do not require users; the main exception is the pluralistic walkthrough, in which a user is invited to provide feedback while members of a product team listen, observe the user, and ask questions.

After reading this book, you will be able to use these UI inspection methods with confidence.


Information

Topic: Design

Subtopic: Human-computer interaction

Chapter 1

Heuristic Evaluation

Heuristic evaluation is a usability inspection method that asks usability practitioners and other stakeholders to evaluate a user interface based on a set of principles or commonsense rules. This method was originally conceived as a discount usability method that could be used to find problems early using wireframes, prototypes, and working products. A side benefit of the method is that evaluators learn about the principles that support good usability. Heuristic evaluation and related inspection methods are now among the most common methods for finding usability problems.

Keywords

Heuristic; heuristic evaluation; inspection; usability inspection; walkthrough

Outline

Overview of Heuristic Evaluation

When Should You Use Heuristic Evaluation?

Strengths

Weaknesses

What Resources Do You Need to Conduct a Heuristic Evaluation?

Personnel, Participants, and Training

Hardware and Software

Documents and Materials

Procedures and Practical Advice on the Heuristic Evaluation Method

Planning a Heuristic Evaluation Session

Conducting a Heuristic Evaluation

After the Heuristic Evaluations by Individuals

Variations and Extensions of the Heuristic Evaluation Method

The Group Heuristic Evaluation with Minimal Preparation

Crowdsourced Heuristic Evaluation

Participatory Heuristic Evaluation

Cooperative Evaluation

Heuristic Walkthrough

HE-Plus Method

Major Issues in the Use of the Heuristic Evaluation Method

How Does the UX Team Generate Heuristics When the Basic Set Is Not Sufficient?

Do Heuristic Evaluations Find “Real” Problems?

Does Heuristic Evaluation Lead to Better Products?

How Much Does Expertise Matter?

Should Inspections and Walkthroughs Highlight Positive Aspects of a Product’s UI?

Individual Reliability and Group Thoroughness

Data, Analysis, and Reporting

Conclusions

Alternate Names: Expert review, heuristic inspection, usability inspection, peer review, user interface inspection.

Related Methods: Cognitive walkthrough, expert review, formal usability inspection, perspective-based user interface inspection, pluralistic walkthrough.

Overview of Heuristic Evaluation

A heuristic evaluation is a type of user interface (UI) or usability inspection where an individual, or a team of individuals, evaluates a specification, prototype, or product against a brief list of succinct usability or user experience (UX) principles or areas of concern (Nielsen, 1993; Nielsen & Molich, 1990). The heuristic evaluation method is one of the most common methods in user-centered design (UCD) for identifying usability problems (Rosenbaum, Rohn, & Humburg, 2000), although in some cases, what people refer to as a heuristic evaluation might be better categorized as an expert review (Chapter 2) because heuristics were mixed with additional principles and personal beliefs and knowledge about usability.

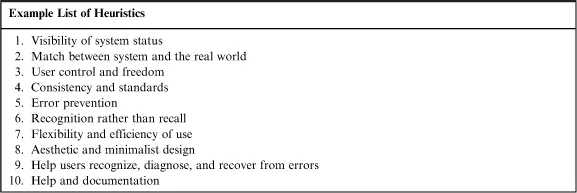

A heuristic is a commonsense rule or a simplified principle. A list of heuristics is meant as an aid or mnemonic device for the evaluators. Table 1.1 is a list of heuristics from Nielsen (1994a) that you might give to your team of evaluators to remind them about potential problem areas.

Table 1.1

A Set of Heuristics from Nielsen (1994a)

There are several general approaches for conducting a heuristic evaluation:

• Object-based. In an object-based heuristic evaluation, evaluators are asked to examine particular UI objects for problems related to the heuristics. These objects can include mobile screens, hardware control panels, web pages, windows, dialog boxes, menus, controls (e.g., radio buttons, push buttons, and text fields), error messages, and keyboard assignments.

• Task-based. In the task-based approach, evaluators are given heuristics and a set of tasks to work through and are asked to report on problems related to heuristics that occur as they perform or simulate the tasks.

• An object–task hybrid. A hybrid approach combines the object and task approaches. Evaluators first work through a set of tasks looking for issues related to heuristics and then evaluate designated UI objects against the same heuristics. The hybrid approach is similar to the heuristic walkthrough (Sears, 1997), which is described later in this book.

In task-based or hybrid approaches, the choice of tasks for the team of evaluators is critical. Questions to consider when choosing tasks include the following:

• Is the task realistic? Simplistic tasks might not reveal serious problems.

• What is the frequency of the task? The frequency of the task might determine whether something is a problem or not. Consider a complex program that you use once a year (e.g., US tax programs). A program intended to be used once a year might require high initial learning support, much feedback, and repeated success messages, all features intended to support the infrequent user. These same features might be considered problems for the daily user of the same program (e.g., a tax accountant or financial advisor) who is interested in efficiency and doesn’t want constant, irritating feedback messages.

• What are the consequences of the task? Will an error during a task result in a minor or major loss of data? Will someone die if there is task failure? If you are working on medical monitoring systems, the consequences of missed problems could be disastrous.

• Are the data used in the task realistic? We often use simple samples of data for usability evaluations because it is convenient, but you might reveal more problems with “dirty data.”

• Are you using data at the right scale? Some tasks are easy with limited data sets (e.g., 100 or 1000 items) but very hard when tens of thousands or millions of items are involved. It is convenient to use small samples for task-based evaluations, but those small samples of test data may hide significant problems.
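The task-selection questions above can be kept as a small checklist record per candidate task. The following is only a minimal sketch: the class name `CandidateTask`, its field names, and the wording of the concerns are hypothetical and do not come from the book.

```python
from dataclasses import dataclass


@dataclass
class CandidateTask:
    """One candidate task for a task-based or hybrid evaluation (hypothetical record)."""
    name: str
    realistic: bool              # is the task realistic rather than simplistic?
    uses_per_year: int           # rough frequency estimate for the intended user
    high_consequence: bool       # could an error cause major data loss or harm?
    realistic_data: bool         # does the test data include "dirty" data?
    production_scale_data: bool  # is the data set at a realistic scale?

    def open_concerns(self) -> list[str]:
        """Return the checklist questions this task does not yet satisfy."""
        concerns = []
        if not self.realistic:
            concerns.append("task may be too simplistic to reveal serious problems")
        if not self.realistic_data:
            concerns.append("test data may be too clean")
        if not self.production_scale_data:
            concerns.append("data set may be too small to expose scale problems")
        return concerns


task = CandidateTask(
    name="file annual tax return",
    realistic=True,
    uses_per_year=1,
    high_consequence=True,
    realistic_data=False,
    production_scale_data=True,
)
for concern in task.open_concerns():
    print(f"{task.name}: {concern}")
```

Frequency and consequence are stored as context rather than flagged automatically, because whether they indicate a problem depends on the intended user, as the tax-program example shows.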

Multiple evaluators are recommended for heuristic evaluations, because different people who evaluate the same UI often identify quite different problems (Hertzum, Jacobsen, & Molich, 2002; Molich & Dumas, 2008; Nielsen, 1993) and also vary considerably in their ratings of the severity of identical problems (Molich, 2011).
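One way to see how differently evaluators report on the same UI is to compare the sets of problems each one found. The sketch below averages a Jaccard-style overlap over every pair of evaluators. The function name, the evaluator names, and the problem IDs are illustrative assumptions, and this overlap measure is not one the text prescribes. It also assumes someone has already decided which reports describe the same problem.

```python
from itertools import combinations


def mean_pairwise_overlap(findings):
    """Average |A & B| / |A | B| over all evaluator pairs.

    findings: dict mapping an evaluator name to the set of problem IDs
    that evaluator reported (IDs are assumed to be matched beforehand).
    """
    pairs = list(combinations(findings.values(), 2))
    if not pairs:
        raise ValueError("need at least two evaluators")
    scores = []
    for a, b in pairs:
        union = a | b
        # Two evaluators who both report nothing are treated as fully agreeing.
        scores.append(len(a & b) / len(union) if union else 1.0)
    return sum(scores) / len(scores)


findings = {
    "evaluator_a": {"P1", "P2", "P3"},
    "evaluator_b": {"P2", "P4"},
    "evaluator_c": {"P1", "P2"},
}
print(round(mean_pairwise_overlap(findings), 3))
```

A low value suggests that each additional evaluator is still contributing problems the others missed.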

During the heuristic evaluation, evaluators can write down problems as they work independently, or they can think aloud while a colleague takes notes about the problems encountered during the evaluation. The results of all the evaluations can then be aggregated into a composite list of usability problems o...
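Aggregating individual results into a composite list can be sketched as a grouping step. This is only an illustration under stated assumptions: the `(evaluator, problem_id, severity)` tuple layout and the numeric severity values are hypothetical, and matching reports to a shared problem ID is assumed to have been done by hand beforehand.

```python
from collections import defaultdict


def composite_list(reports):
    """Merge individual reports into one row per problem.

    reports: iterable of (evaluator, problem_id, severity) tuples, where
    severity is a number on whatever scale the team agreed on (assumption).
    """
    by_problem = defaultdict(dict)
    for evaluator, problem_id, severity in reports:
        by_problem[problem_id][evaluator] = severity

    rows = []
    for problem_id, ratings in by_problem.items():
        severities = list(ratings.values())
        rows.append({
            "problem": problem_id,
            "found_by": sorted(ratings),
            "min_severity": min(severities),
            "max_severity": max(severities),
        })
    # Problems reported by the most evaluators come first; ties sort by ID.
    rows.sort(key=lambda r: (-len(r["found_by"]), r["problem"]))
    return rows


reports = [
    ("ana", "P1", 3),
    ("ben", "P1", 1),
    ("ana", "P2", 2),
]
for row in composite_list(reports):
    print(row)
```

Keeping both the minimum and maximum severity makes disagreement between evaluators visible instead of hiding it behind an average.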

Table of contents

- Cover image

- Title page

- Table of Contents

- Copyright

- Inspections and Walkthroughs

- Chapter 1. Heuristic Evaluation

- Chapter 2. The Individual Expert Review

- Chapter 3. Perspective-Based UI Inspection

- Chapter 4. Cognitive Walkthrough

- Chapter 5. Pluralistic Usability Walkthrough

- Chapter 6. Formal Usability Inspections

- References


User Interface Inspection Methods by Chauncey Wilson is available in PDF and/or ePUB format, alongside other books in Design and Human-Computer Interaction.