1

Multisensor Data Fusion

David L. Hall and James Llinas

CONTENTS

1.1 Introduction

1.2 Multisensor Advantages

1.3 Military Applications

1.4 Nonmilitary Applications

1.5 Three Processing Architectures

1.6 Data Fusion Process Model

1.7 Assessment of the State-of-the-Art

1.8 Dirty Secrets in Data Fusion

1.9 Additional Information

References

1.1 Introduction

Over the past two decades, significant attention has been focused on multisensor data fusion for both military and nonmilitary applications. Data fusion techniques combine data from multiple sensors and related information to achieve more specific inferences than could be achieved by using a single, independent sensor. Data fusion refers to the combination of data from multiple sensors (either of the same or different types), whereas information fusion refers to the combination of data and information from sensors, human reports, databases, etc.

The concept of multisensor data fusion is hardly new. As humans and animals evolved, they developed the ability to use multiple senses to help them survive. For example, assessing the quality of an edible substance may not be possible using only the sense of vision; the combination of sight, touch, smell, and taste is far more effective. Similarly, when vision is limited by structures and vegetation, the sense of hearing can provide advance warning of impending dangers. Thus, multisensor data fusion is naturally performed by animals and humans to assess the surrounding environment more accurately and to identify threats, thereby improving their chances of survival. Interestingly, recent applications of data fusion¹ have combined data from an artificial nose and an artificial tongue using neural networks and fuzzy logic.

Although the concept of data fusion is not new, the emergence of new sensors, advanced processing techniques, improved processing hardware, and wideband communications has made real-time fusion of data increasingly viable. Just as the advent of symbolic processing computers (e.g., the Symbolics computer and the Lambda machine) in the early 1980s provided an impetus to artificial intelligence, recent advances in computing and sensing have provided the capability to emulate, in hardware and software, the natural data fusion capabilities of humans and animals. Currently, data fusion systems are used extensively for target tracking, automated identification of targets, and limited automated reasoning applications. Data fusion technology has rapidly advanced from a loose collection of related techniques to an emerging engineering discipline with standardized terminology, a collection of robust mathematical techniques, and established system design principles. Indeed, the remaining chapters of this handbook provide an overview of these techniques, design principles, and example applications.

Applications for multisensor data fusion are widespread. Military applications include automated target recognition (e.g., for smart weapons), guidance for autonomous vehicles, remote sensing, battlefield surveillance, and automated threat recognition (e.g., identification-friend-foe-neutral [IFFN] systems). Military applications have also extended to condition monitoring of weapons and machinery, to monitoring of the health status of individual soldiers, and to assistance in logistics. Nonmilitary applications include monitoring of manufacturing processes, condition-based maintenance of complex machinery, environmental monitoring, robotics, and medical applications.

Techniques to combine or fuse data are drawn from a diverse set of more traditional disciplines, including digital signal processing, statistical estimation, control theory, artificial intelligence, and classic numerical methods. Historically, data fusion methods were developed primarily for military applications. In recent years, however, these methods have been applied to civilian problems, and a bidirectional transfer of technology has begun.

1.2 Multisensor Advantages

Fused data from multiple sensors provide several advantages over data from a single sensor. First, if several identical sensors are used (e.g., identical radars tracking a moving object), combining their observations results in an improved estimate of the target's position and velocity. A statistical advantage is gained by combining N independent observations (e.g., the estimate of the target location or velocity is improved by a factor proportional to $N^{1/2}$), assuming the data are combined in an optimal manner. The same result could also be obtained by combining N independent observations from a single sensor.
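The $N^{1/2}$ improvement can be checked with a short sketch. It assumes each sensor reports an unbiased scalar estimate with independent, zero-mean Gaussian error of known standard deviation, and it uses inverse-variance weighting as the "optimal" combination. The function name `fuse_independent` and the 10 m error figure are illustrative assumptions, not taken from the text.

```python
import math
from typing import Sequence, Tuple


def fuse_independent(values: Sequence[float], sigmas: Sequence[float]) -> Tuple[float, float]:
    """Inverse-variance weighted fusion of independent, unbiased scalar estimates.

    Assumes each value carries zero-mean Gaussian error with the given standard
    deviation and that errors are independent across observations.
    Returns (fused_value, fused_sigma).
    """
    if len(values) != len(sigmas) or not values:
        raise ValueError("need one sigma per value and at least one value")
    weights = [1.0 / s**2 for s in sigmas]  # information = 1 / variance
    total = sum(weights)
    fused = sum(w * v for w, v in zip(weights, values)) / total
    return fused, math.sqrt(1.0 / total)  # fused sigma = 1 / sqrt(total information)


if __name__ == "__main__":
    sigma = 10.0  # hypothetical per-radar position error, metres
    for n in (1, 4, 16, 64):
        _, fused_sigma = fuse_independent([1000.0] * n, [sigma] * n)
        # With N identical sensors the fused sigma equals sigma / sqrt(N).
        print(f"N={n:3d}  fused sigma={fused_sigma:6.3f}  sigma/sqrt(N)={sigma / math.sqrt(n):6.3f}")
```

For N identical sensors the total information is $N/\sigma^2$, so the fused standard deviation is $\sigma/\sqrt{N}$; the same arithmetic applies to N independent observations from one sensor.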

The second advantage is that the relative placement or motion of multiple sensors can be exploited to improve the observation process. For example, two sensors that measure angular directions to an object can be coordinated to determine the object's position by triangulation. This technique is used in surveying and in commercial navigation (e.g., VHF omnidirectional range [VOR]). Similarly, two sensors, one moving in a known way with respect to the other, can be used to measure an object's position and velocity instantaneously with respect to the observing sensors.
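Triangulation from two angle-only sensors can be sketched as the intersection of two bearing lines. This minimal sketch assumes noise-free bearings, known sensor positions in a flat 2-D plane, and bearings measured counterclockwise from the +x axis (navigation bearings such as VOR radials are measured clockwise from north, so a real system would convert first). The function name `triangulate` is hypothetical.

```python
import math
from typing import Tuple

Point = Tuple[float, float]


def triangulate(p1: Point, bearing1: float, p2: Point, bearing2: float) -> Point:
    """Intersect two bearing lines from known sensor positions.

    Bearings are in radians, counterclockwise from the +x axis (assumed convention).
    Each bearing is treated as an infinite line, not a ray.
    """
    d1 = (math.cos(bearing1), math.sin(bearing1))  # unit direction from sensor 1
    d2 = (math.cos(bearing2), math.sin(bearing2))  # unit direction from sensor 2
    b = (p2[0] - p1[0], p2[1] - p1[1])             # baseline between the sensors
    denom = d1[0] * d2[1] - d1[1] * d2[0]          # 2-D cross product d1 x d2
    if abs(denom) < 1e-9:
        raise ValueError("bearing lines are (nearly) parallel; no unique fix")
    # Solve p1 + t1*d1 = p2 + t2*d2 for t1 (distance along the first line).
    t1 = (b[0] * d2[1] - b[1] * d2[0]) / denom
    return (p1[0] + t1 * d1[0], p1[1] + t1 * d1[1])


if __name__ == "__main__":
    # Sensors 10 units apart; the target sits where 45 and 135 degree bearings cross.
    print(triangulate((0.0, 0.0), math.radians(45), (10.0, 0.0), math.radians(135)))
```

The example call returns approximately `(5.0, 5.0)`. The parallel-line check reflects a practical point: when the two bearings are nearly parallel, the geometry is poor and small angular errors produce large position errors.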

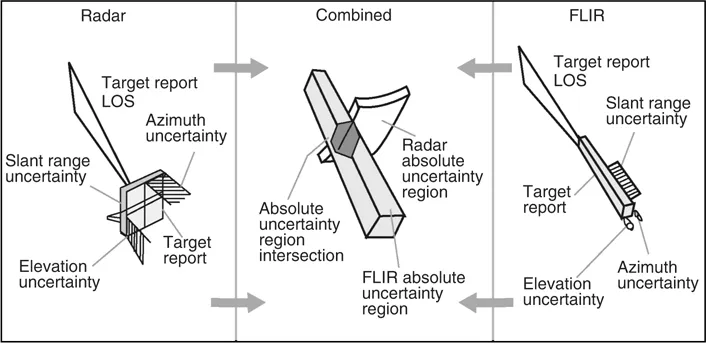

The third advantage gained by using multiple sensors is improved observability. Broadening the baseline of physical observables can result in significant improvements. Figure 1.1 provides a simple example of a moving object, such as an aircraft, that is observed by both a pulsed radar and a forward-looking infrared (FLIR) imaging sensor. The radar can accurately determine the aircraft's range but has a limited ability to determine its angular direction. By contrast, the infrared imaging sensor can accurately determine the aircraft's angular direction but cannot measure its range. If these two observations are correctly associated (as shown in Figure 1.1), the combination of the two sensors provides a better determination of location than either sensor could provide alone. The result is a reduced error region for the fused or combined location estimate. A similar effect may be obtained in determining the identity of an object on the basis of observations of its attributes. For example, there is evidence that bats identify their prey by a combination of factors, including size, texture (based on acoustic signature), and kinematic behavior. Interestingly, just as humans may use spoofing techniques to confuse sensor systems, some moths confuse bats by emitting sounds similar to those emitted by a bat closing in on prey (see http://www.desertmuseum.org/books/nhsd_moths.html, downloaded on October 4, 2007).

1.3 Military Applications

The Department of Defense (DoD) community focuses on problems involving the location, characterization, and identification of dynamic entities such as emitters, platforms, weapons, and military units. These dynamic data are often referred to as an order-of-battle database or, if superimposed on a map display, an order-of-battle display. Beyond building an order-of-battle database, DoD users seek higher-level inferences about the enemy situation (e.g., the relationships among entities and their relationships with the environment and higher-level enemy organizations). Examples of DoD-related applications include ocean surveillance, air-to-air defense, battlefield intelligence, surveillance and target acquisition, and strategic warning and defense. Each of these military applications involves a particular focus, a sensor suite, a desired set of inferences, and a unique set of challenges, as shown in Table 1.1.

Ocean surveillance systems are designed to detect, track, and identify ocean-based targets and events. Examples include antisubmarine warfare systems to support navy tactical fleet operations and automated systems to guide autonomous vehicles. Sensor suites can include radar, sonar, electronic intelligence (ELINT), observation of communications traffic, infrared, and synthetic aperture radar (SAR) observations. The surveillance volume for ocean surveillance may encompass hundreds of nautical miles and include air, surface, and subsurface targets. Multiple surveillance platforms can be involved, and numerous targets can be tracked. Challenges in ocean surveillance include the large surveillance volume, the combination of targets and sensors, and the complex signal propagation environment, especially for underwater sonar sensing. An example of an ocean surveillance system is shown in Figure 1.2.

Air-to-air and surface-to-air defense systems have been developed by the military to detect, track, and identify aircraft and antiaircraft weapons and sensors. These defense sys...