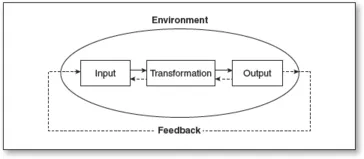

Inputs.

Inputs are resources the program takes in from the environment. They may include funding, technology, equipment, facilities, personnel, and clients. Inputs form and sustain a program, but they cannot work effectively without systematic organization. Usually, a program requires an implementing organization that can secure and manage its inputs.

Transformation.

A program converts inputs into outputs through transformation. This process, which begins with the initial implementation of the treatment/intervention prescribed by a program, can be described as the stage during which implementers provide services to clients. For example, the implementation of a new curriculum in a school may mean the process of teachers teaching students new subject material in accordance with existing instructional rules and administrative guidelines. Transformation also includes those sequential events necessary to achieve desirable outputs. For example, to increase students’ math and reading scores, an education program may need to first boost students’ motivation to learn.

Environment.

The environment consists of any factors that, despite lying outside a program’s boundaries, can nevertheless either foster or constrain that program’s implementation. Such factors may include social norms, political structures, the economy, funding agencies, interest groups, and concerned citizens. Because an intervention program is an open system, it depends on the environment for its inputs: clients, personnel, money, and so on. Furthermore, the continuation of a program often depends on how the general environment reacts to program outputs. Are the outputs valuable? Are they acceptable? For example, if the staff of a day care program is suspected of abusing children, the environment would find that output unacceptable. Parents would immediately remove their children from the program, law enforcement might press criminal charges, and the community might boycott the day care center. Finally, the effectiveness of an open system, such as an intervention program, is influenced by external factors such as cultural norms and economic, social, and political conditions. A contrasting system may be illustrative: In a biological system, the use of a medicine to cure an illness is unlikely to be directly influenced by external factors such as race, culture, social norms, or poverty.

Feedback.

So that decision makers can maintain success and correct any problems, an open system requires information about inputs and outputs, transformation, and the environment’s responses to these components. This feedback is the basis of program evaluation. Decision makers need information to gauge whether inputs are adequate and organized, interventions are implemented appropriately, target groups are being reached, and clients are receiving quality services. Feedback is also critical to evaluating whether outputs are in alignment with the program’s goals and are meeting the expectations of stakeholders. Stakeholders are people who have a vested interest in a program and are likely to be affected by evaluation results; they include funding agencies, decision makers, clients, program managers, and staff. Without feedback, a system is bound to deteriorate and eventually die. Insightful program evaluation helps to both sustain a program and prevent it from failing. The action of feedback within the system is indicated by the dotted lines in Figure 1.1.

To survive and thrive within an open system, a program must perform at least two major functions. First, internally, it must ensure the smooth transformation of inputs into desirable outputs. For example, an education program would experience negative side effects if faced with disruptions like high staff turnover, excessive student absenteeism, or insufficient textbooks. Second, externally, a program must continuously interact with its environment in order to obtain the resources and support necessary for its survival. That same education program would become quite vulnerable if support from parents and school administrators disappeared.

Thus, because programs are subject to the influence of their environment, every program is an open system. The characteristics of an open system can also be identified in any given policy, which is a concept closely related to that of a program. Although policies may seem grander than programs—in terms of the envisioned magnitude of an intervention, the number of people affected, and the legislative process—the principles and issues this book addresses are relevant to both. Throughout the rest of the book, the word program may be understood to mean program or policy.

Based on the discussion above, this book defines program evaluation as the process of systematically gathering empirical data and contextual information about an intervention program, specifically answers to what, who, how, whether, and why questions that will assist in assessing a program’s planning, implementation, and/or effectiveness. This definition suggests many potential questions for evaluators to ask during an evaluation. The “what” questions include: What are the intervention, the outcomes, and the other major components? The “who” questions might be: Who are the implementers, and who are the target clients? The “how” questions might include: How is the program implemented? The “whether” questions might ask whether the program plan is sound, the implementation adequate, and the intervention effective. And the “why” questions could be: Why does the program work or not work? One of the essential tasks for evaluators is to figure out which questions are important and interesting to stakeholders and which evaluation approaches are available for answering them. These topics will be systematically discussed in Chapter 2. The purpose of program evaluation is to make the program accountable to its funding agencies, decision makers, or other stakeholders and to enable program management and implementers to improve the program’s delivery of acceptable outcomes.