
CHAPTER 1

A New Model for Results

Training That Delivers Results aims to change learning organizations and their leaders by offering a strategic model that focuses on achieving desired business results. The strategic instructional design process described in this book produces observable, measurable, and repeatable training programs that deliver results. Observable means that you can clearly see the intended behaviors of, and outcomes achieved by, your performers. Measurable means that you can compare the results of your learners' performance against a predetermined standard. The system is efficient and predictable yet still offers room for flexibility and creativity.

The Handshaw Instructional Design Model applies principles of both performance improvement and instructional design to a variety of learning situations. Achieving business-focused outcomes begins by identifying both learning and nonlearning solutions to performance problems. Instructional design practiced this way doesn't cost time and money; it saves both.

PERFORMANCE IMPROVEMENT AND INSTRUCTIONAL DESIGN

Some people in our profession consider themselves instructional designers; others consider themselves to be performance consultants. An effective way to deliver value is by integrating the skills of both performance improvement and instructional design. Adding the steps of performance consulting from the Handshaw Instructional Design Model enables you to link learning goals to strategic business goals.

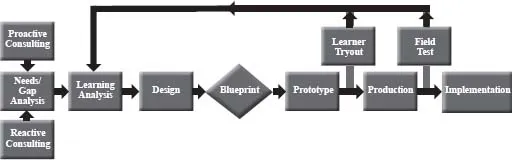

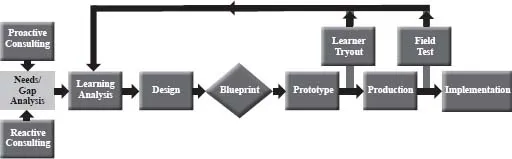

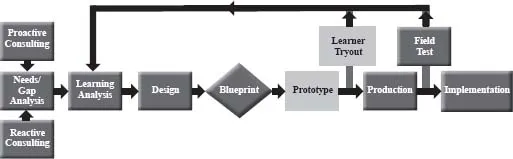

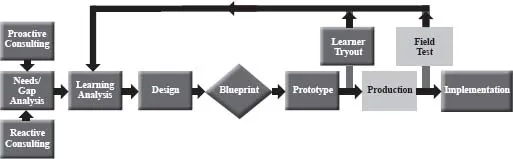

A New Model

The Handshaw Instructional Design Model integrates the principles of performance improvement with those of classic instructional design (see Figure 1.1). Although many of the parts of this model are not new, the concept of combining elements of performance improvement and instructional design into one straightforward, easy-to-use model is new. If your instructional design model is not saving you time and delivering business results, then this may be an approach to consider. By identifying both learning and nonlearning solutions up front, designers are better able to spend their time and resources delivering solutions that solve the right performance challenges. I have spent more than thirty years working with my team and our clients to refine our model and its application in a wide variety of situations. The following section describes the basics for applying the model.

FIGURE 1.1 Handshaw Instructional Design Model

THE HANDSHAW INSTRUCTIONAL DESIGN MODEL EXPLAINED

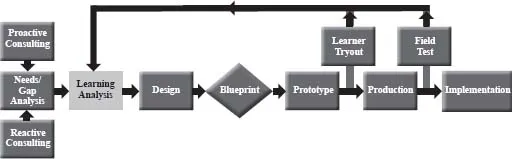

Proactive Performance Consulting

You can establish a consulting relationship with your client through proactive performance consulting (see Figure 1.2). The purpose of establishing this relationship is to ensure that the training you develop aligns with your client's business goals. You can develop a “trusted partner” relationship with your client by having regular proactive consulting meetings. These meetings are informal, conversational, and simple to conduct. The eight well-tested principles of the successful proactive consulting meeting are detailed in Chapter 2.

Reactive Performance Consulting

Most instructional designers are accustomed to meeting with their clients to react to training requests. These meetings present an opportunity to reframe the training request in order to align the training need with the business need. The reactive consulting meetings position you to take responsibility for results and outcomes of a learning program and help you transition from training “order taker” to trusted partner. (See Figure 1.3.)

FIGURE 1.2 Proactive Performance Consulting Phase

FIGURE 1.3 Reactive Performance Consulting Phase

Needs/Gap Analysis

The gap analysis is a frequently used type of needs analysis. It may be used after a successful proactive or reactive meeting in which appropriate learning needs have been identified. The first gap you identify is the difference between the current and the expected business outcomes. Next, determine the gap between the current performance and the performance required to achieve the business result. The exact level of performance required to close the gap is defined during the Analysis and Design phases in the model. Armed with this information, you can identify both learning and nonlearning solutions to help you and your client close both the performance and the business gaps. (See Figure 1.4.)
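The arithmetic behind the two gaps can be sketched in a few lines of Python. This is only an illustration, not part of the Handshaw model itself: the `Gap` class, the measure names, and every figure below are hypothetical and were invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    """Difference between an expected level and the current level."""
    name: str
    current: float
    expected: float

    @property
    def size(self) -> float:
        # A positive size means the current level falls short of the expectation.
        return self.expected - self.current


# Hypothetical figures, invented only for illustration.
business_gap = Gap("monthly sales (units)", current=800, expected=1000)
performance_gap = Gap("qualified calls per rep per day", current=12, expected=15)

for gap in (business_gap, performance_gap):
    print(f"{gap.name}: short by {gap.size:g}")
```

Stating the business gap first and the performance gap second mirrors the order described above; deciding which learning and nonlearning solutions close each gap remains a judgment you make with your client.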

FIGURE 1.4 Needs/Gap Analysis Phase

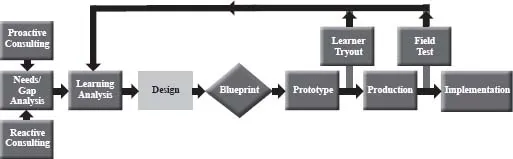

Learning Analysis

Once you have identified the skills gap, the Learning Analysis phase begins (Figure 1.5). The first step, a task analysis, is essentially a snapshot of the perfect performer engaging in a work task in a way that achieves the desired business result. Developing a task analysis verified by subject matter experts (SMEs) and stakeholders before beginning training design is an essential step. It is cost-effective and helps you avoid project delays and cost overruns.

There are three other types of analysis that help you make decisions later, during the Design phase. You can conduct an audience analysis to find out what your learners already know about the training program's content. Collecting helpful demographic information about the intended audience is also part of this analysis. The audience analysis doesn't require a lot of time and can be reused when conducting training for the same audience in the future.

Many organizations overlook the importance of culture. Culture can be one of the greatest enhancers or strongest barriers to a successful learning event. Conduct a learning culture analysis to leverage your organization's culture to impact the ultimate, lasting success of your learning design.

FIGURE 1.5 Learning Analysis Phase

The last piece of analysis you should complete is called a delivery systems analysis. This may be more useful for outside service providers than for internal practitioners, but conducting this type of analysis might help you avoid the really embarrassing situation of specifying the use of an unpopular or poorly performing learning delivery system. A delivery systems analysis can also be revised and reused for future projects.

Design

The Design phase (Figure 1.6) begins with the development of performance objectives (you can substitute learning objectives if you prefer that term). Successful selection and design of measurement instruments begins with well-written objectives. Although I am a proponent of flexibility, I don't recommend it here. You will reap the benefits of good objectives when measuring learning outcomes—for example, when you are required to measure a learner's mastery of performance objectives.

It also makes sense to select and design your testing instruments once you have agreed upon objectives. When designing for results, you should limit your test design to criterion-referenced tests (CRTs) only. The criteria you reference in this case are the performance objectives. The payback for following these steps will be apparent when you define your instructional strategy. You can eliminate misunderstandings by defining a measurement strategy before you try to select an instructional strategy.
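As a rough illustration of what "criterion-referenced" means in practice, the sketch below compares one learner's results with a fixed standard for each performance objective rather than with other learners' scores. The objective names, the mastery thresholds, and the `mastered_objectives` helper are all hypothetical, chosen only for this example.

```python
from typing import Dict

# Hypothetical objectives and mastery standards (fraction of items correct).
MASTERY_STANDARD: Dict[str, float] = {
    "greet_customer": 0.8,
    "process_return": 0.9,
}


def mastered_objectives(scores: Dict[str, float]) -> Dict[str, bool]:
    """Compare one learner's scores with the fixed standard for each objective."""
    return {
        objective: scores.get(objective, 0.0) >= standard
        for objective, standard in MASTERY_STANDARD.items()
    }


# One learner's hypothetical results on the test items tied to each objective.
learner_scores = {"greet_customer": 0.85, "process_return": 0.75}
result = mastered_objectives(learner_scores)
print(result)
```

Because each objective carries its own standard, the result shows which objectives a learner has mastered, not how the learner ranks against peers.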

FIGURE 1.6 Design Phase

FIGURE 1.7 Blueprint Phase

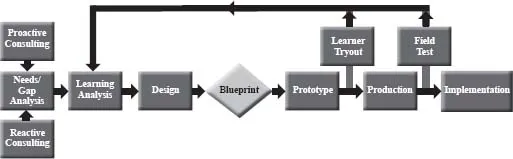

Blueprint

You won't find the blueprint meeting in any traditional instructional design model such as ADDIE (Analysis, Design, Development, Implementation, and Evaluation). This tool is essential to the development of a performance partnership with your clients. A blueprint meeting is a forum that allows you to present your measurement strategy and instructional strategy to your stakeholders, subject matter experts, and others on the design team. The meeting can be held in person or virtually and is ideal for answering questions, clarifying misunderstandings, and gaining consensus. If you really want to be a trusted partner, invest two or three hours in this meeting. (See Figure 1.7.)

FIGURE 1.8 Prototype and Learner Tryout Phases

Prototype and Learner Tryout

Another useful tool for preventing “do-overs” and gaining consensus as a trusted partner is the simple step of developing and testing a prototype. First, select a training module for your prototype that is a fair representation of your measurement and instructional strategies. Then, before you go too far in developing the rest of the course, test your prototype with a small group of sample learners in a learner tryout. Use this structured test to yield valuable data for verifying your chosen strategies. You may discover differences of opinion with your subject matter experts or even among designers on your own team. I've found that observing sample learners during a learner tryout always uncovers the correct approach. The feedback loops in the model allow you to go back and revise the analysis and subsequent design steps. (See Figure 1.8.)

Production and Field Test

The Production phase is the largest and costliest of all the phases in the model (see Figure 1.9). It involves development of content and testing instruments. Because this phase is so time-consuming, it is important to ensure that the other phases are done correctly in order to avoid rework.

FIGURE 1.9 Production and Field Test Phases

The evaluation carried out in this phase is called a field test (some of our clients prefer the term pilot test). Whatever label you use, you need to observe sample learners as they test the entire learning solution under the exact conditions they will experience during implementation. Testing your course with a controlled audience provides a measure of reassurance to you and your client that the rollout will go smoothly. This is a good feeling to have if your course is going to be released to a large audience in a global organization.

Implementation

If you've carefully followed the recommended steps in the model, the Implementation phase...