
Part 1. Foundations

What is machine learning? What is the game of Go, and why was it such an important milestone for game AI? How is teaching a computer to play Go different from teaching it to play chess or checkers?

In this part, we answer all those questions, and you’ll build a flexible Go game logic library that will provide a foundation for the rest of the book.


1 Toward deep learning: a machine-learning introduction

This chapter covers:

- Machine learning and its differences from traditional programming

- Problems that can and can’t be solved with machine learning

- Machine learning’s relationship to artificial intelligence

- The structure of a machine-learning system

- Disciplines of machine learning

As long as computers have existed, programmers have been interested in artificial intelligence (AI): implementing human-like behavior on a computer. Games have long been a popular subject for AI researchers. During the personal computer era, AIs have overtaken humans at checkers, backgammon, chess, and almost all classic board games. But the ancient strategy game Go remained stubbornly out of reach for computers for decades. Then in 2016, Google DeepMind’s AlphaGo AI challenged 14-time world champion Lee Sedol and won four out of five games. The next revision of AlphaGo was completely out of reach for human players: it won 60 straight games, taking down just about every notable Go player in the process.

AlphaGo’s breakthrough was enhancing classical AI algorithms with machine learning. More specifically, AlphaGo used modern techniques known as deep learning—algorithms that can organize raw data into useful layers of abstraction. These techniques aren’t limited to games at all. You’ll also find deep learning in applications for identifying images, understanding speech, translating natural languages, and guiding robots. Mastering the foundations of deep learning will equip you to understand how all these applications work.

Why write a whole book about computer Go? You might suspect that the authors are die-hard Go nuts—OK, guilty as charged. But the real reason to study Go, as opposed to chess or backgammon, is that a strong Go AI requires deep learning. A top-tier chess engine such as Stockfish is full of chess-specific logic; you need a certain amount of knowledge about the game to write something like that. With deep learning, you can teach a computer to imitate strong Go players, even if you don’t understand what they’re doing. And that’s a powerful technique that opens up all kinds of applications, both in games and in the real world.

Chess and checkers AIs are designed around reading out the game further and more accurately than human players can. There are two problems with applying this technique to Go. First, you can’t read far ahead, because the game has too many moves to consider. Second, even if you could read ahead, you don’t know how to evaluate whether the resulting position is good. It turns out that deep learning is the key to solving both problems.

This book provides a practical introduction to deep learning by covering the techniques that powered AlphaGo. You don’t need to study the game of Go in much detail to do this; instead, you’ll look at the general principles of the way a machine can learn. This chapter introduces machine learning and the kinds of problems it can (and can’t) solve. You’ll work through examples that illustrate the major branches of machine learning, and see how deep learning has brought machine learning into new domains.

1.1. What is machine learning?

Consider the task of identifying a photo of a friend. This is effortless for most people, even if the photo is badly lit, or your friend got a haircut or is wearing a new shirt. But suppose you want to program a computer to do the same thing. Where would you even begin? This is the kind of problem that machine learning can solve.

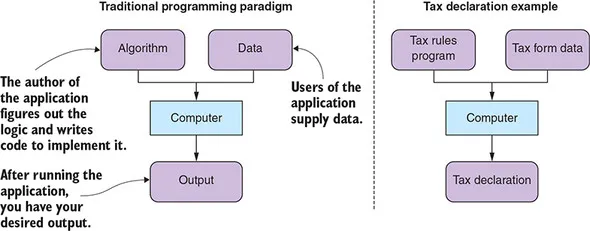

Traditionally, computer programming is about applying clear rules to structured data. A human developer programs a computer to execute a set of instructions on data, and out comes the desired result, as shown in figure 1.1. Think of a tax form: every box has a well-defined meaning, and detailed rules indicate how to make various calculations from them. Depending on where you live, these rules may be extremely complicated. It’s easy for people to make a mistake here, but this is exactly the kind of task that computer programs excel at.

Figure 1.1. The standard programming paradigm that most software developers are familiar with. The developer identifies the algorithm and implements the code; the users supply the data.
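As a minimal sketch of this rules-on-structured-data style, the snippet below computes tax from explicit brackets. The bracket limits, the rates, and the name `tax_owed` are invented for illustration and don’t correspond to any real tax code.

```python
# Hypothetical tax brackets: (upper limit of the bracket, marginal rate).
# These numbers are made up purely for illustration.
BRACKETS = [
    (10_000, 0.00),
    (40_000, 0.10),
    (float("inf"), 0.20),
]


def tax_owed(income):
    """Apply the explicit bracket rules to one well-defined input."""
    owed = 0.0
    lower = 0
    for upper, rate in BRACKETS:
        if income > lower:
            # Only the slice of income that falls inside this bracket is taxed at its rate.
            taxable = min(income, upper) - lower
            owed += taxable * rate
        lower = upper
    return owed


print(tax_owed(50_000))
```

Every step here was written down by the developer in advance; the user only supplies the income figure.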

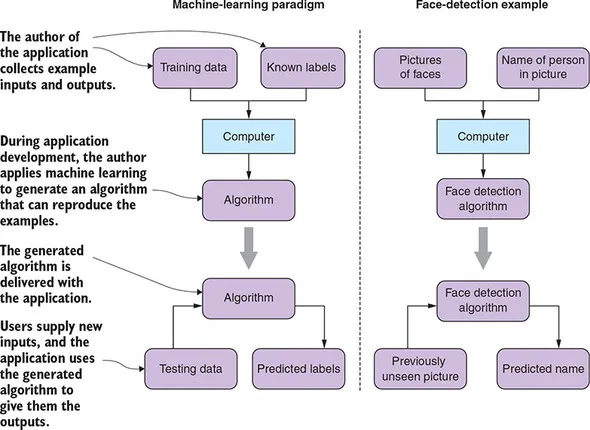

In contrast to the traditional programming paradigm, machine learning is a family of techniques for inferring a program or algorithm from example data, rather than implementing it directly. So, with machine learning, you still feed your computer data, but instead of imposing instructions and expecting output, you provide the expected output and let the machine find an algorithm by itself.

To build a computer program that can identify who’s in a photo, you can apply an algorithm that analyzes a large collection of images of your friend and generates a function that matches them. If you do this correctly, the generated function will also match new photos that weren’t part of the original collection. Of course, the program will have no knowledge of its purpose; all it can do is identify things that are similar to the original images you fed it.

In this situation, the images you provide to the machine are called training data, and the names of the people in the pictures are called labels. After you’ve trained an algorithm for your purpose, you can test it by using it to predict labels for new data. Figure 1.2 displays this example alongside a schema of the machine-learning paradigm.

Figure 1.2. The machine-learning paradigm: during development, you generate an algorithm from a data set, and then incorporate that into your final application.

Machine learning comes in when rules aren’t clear; it can solve problems of the “I’ll know it when I see it” variety. Instead of programming the function directly, you provide data that indicates what the function should do, and then methodically generate a function that matches your data.
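The train-then-predict workflow described above can be sketched in a few lines. This is a toy under stated assumptions, not the method used later in the book: the learner is a simple 1-nearest-neighbor rule, each photo is assumed to have already been reduced to two made-up numeric features, and the names `train`, `identify`, `Alice`, and `Bob` are hypothetical.

```python
import math


def train(examples, labels):
    """Generate a prediction function from labeled examples (1-nearest neighbor)."""
    memory = list(zip(examples, labels))

    def predict(features):
        # Return the label of the stored example closest to the new input.
        nearest = min(memory, key=lambda pair: math.dist(pair[0], features))
        return nearest[1]

    return predict


# Hypothetical training data: each photo reduced to two invented features.
training_data = [(0.9, 0.2), (0.8, 0.3), (0.1, 0.7), (0.2, 0.9)]
labels = ["Alice", "Alice", "Bob", "Bob"]

# "Training" produces the function; nobody wrote the identification rules by hand.
identify = train(training_data, labels)

# Predict the label for a photo that was not in the training data.
print(identify((0.85, 0.25)))  # prints "Alice"
```

Note that `identify` knows nothing about faces or friends. It only reports which training example a new input most resembles, which is why the quality of the training data matters so much.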

In practice, you usually combine machine learning with traditional programming to build a useful application. For our face-identification app, you have to instruct the computer on how to find, load, and transform the example images before you can apply a machine-learning algorithm. Beyond that, you might use hand-rolled heuristics to separate headshots from photos of sunsets and latte art; then you can apply machine learning to put names to faces. Often a mixture of traditional programming techniques and advanced machine-learning algorithms will be superior to either one alone.
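One way to picture this mixture is a pipeline in which an explicit heuristic filters the photos and a learned function labels whatever passes. Everything in this sketch is hypothetical: the metadata fields `face_area_fraction` and `features`, the 0.2 threshold, and the stand-in model that takes the place of a real trained function.

```python
def looks_like_headshot(photo):
    """Hand-rolled heuristic: a made-up rule on made-up photo metadata."""
    return photo["face_area_fraction"] >= 0.2


def name_faces(photos, learned_model):
    """Filter with the heuristic, then let the learned model label what remains."""
    results = {}
    for photo in photos:
        if looks_like_headshot(photo):
            results[photo["filename"]] = learned_model(photo["features"])
    return results


def stand_in_model(features):
    """Placeholder for a trained function; a real one would be generated from data."""
    return "Alice" if features[0] > 0.5 else "Bob"


photos = [
    {"filename": "portrait.jpg", "face_area_fraction": 0.45, "features": (0.9, 0.2)},
    {"filename": "sunset.jpg", "face_area_fraction": 0.0, "features": (0.3, 0.3)},
    {"filename": "selfie.jpg", "face_area_fraction": 0.30, "features": (0.1, 0.8)},
]

print(name_faces(photos, stand_in_model))
```

The heuristic stays easy to read and debug, while the opaque learned part is confined to the one step where explicit rules are hard to write.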

1.1.1. How does machine learning relate to AI?

Artificial intelligence, in the broadest sense, refers to any technique for making computers imitate human behavior. AI includes a huge range of techniques, including the following:

- Logic production systems, which apply formal logic to evaluate statements

- Expert systems, in which programmers try to directly encode human knowledge into software

- Fuzzy logic, which defines algorithms to help computers process imprecise statements

These sorts of rules-based techniques are sometimes called classical AI or GOFAI (good old-fashioned AI).

Machine learning is just one of many fields in AI, but today it’s arguably the most successful one. In particular, the subfield of deep learning is behind some of the most exciting breakthroughs in AI, including tasks that eluded researchers for decades. In classical AI, researchers would study human behavior and try to encode rules that match it. Machine learning and deep learning flip the problem on its head: now you collect examples of human behavior and apply mathematical and statistical techniques to extract the rules.

Deep learning is so ubiquitous that some people in the community use AI and deep learning interchangeably. For clarity, we’ll use AI to refer to the general problem of imitating human behavior with computers, and machine learning or deep learning to refer to mathematical techniques for extracting algorithms from examples.

1.1.2. What you can and can’t do with machine learning

Machine learning is a specialized technique. You wouldn’t use machine learning to update database records or render a user interface. Traditional programming should be preferred in the following situations:

- Traditional algorithms solve the problem directly. If you can directly write code to solve a problem, it’ll be easier to understand, maintain, test, and debug.

- You expect perfect accuracy. All complex software contains bugs. But in traditional software engineering, you expect to methodically identify and fix bugs. That’s not always possible with machine learning. You can improve machine-learning systems, but focusing too much on a specific error often makes the overall system worse.

- Simple heuristics work well. If you can implement a rule that’s good enough with just a few lines of code, do so and be happy. A simple heuristic, implemented clearly, will be easy to understand and maintain. Functions that are implemented with machine learning are opaque and require a separate training process to update. (On the other hand,...