Untangle your web scraping complexities and access web data with ease using Python scriptsAbout This Book• Hands-on recipes for advancing your web scraping skills to expert level.• One-Stop Solution Guide to address complex and challenging web scraping tasks using Python.• Understand the web page structure and collect meaningful data from the website with ease Who This Book Is ForThis book is ideal for Python programmers, web administrators, security professionals or someone who wants to perform web analytics would find this book relevant and useful. Familiarity with Python and basic understanding of web scraping would be useful to take full advantage of this book.What You Will Learn• Use a wide variety of tools to scrape any website and data—including BeautifulSoup, Scrapy, Selenium, and many more• Master expression languages such as XPath, CSS, and regular expressions to extract web data• Deal with scraping traps such as hidden form fields, throttling, pagination, and different status codes• Build robust scraping pipelines with SQS and RabbitMQ• Scrape assets such as images media and know what to do when Scraper fails to run• Explore ETL techniques of build a customized crawler, parser, and convert structured and unstructured data from websites• Deploy and run your scraper-as-aservice in AWS Elastic Container ServiceIn DetailPython Web Scraping Cookbook is a solution-focused book that will teach you techniques to develop high-performance scrapers and deal with crawlers, sitemaps, forms automation, Ajax-based sites, caches, and more.You'll explore a number of real-world scenarios where every part of the development/product life cycle will be fully covered. You will not only develop the skills to design and develop reliable, performance data flows, but also deploy your codebase to an AWS. If you are involved in software engineering, product development, or data mining (or are interested in building data-driven products), you will find this book useful as each recipe has a clear purpose and objective.Right from extracting data from the websites to writing a sophisticated web crawler, the book's independent recipes will be a godsend on the job. This book covers Python libraries, requests, and BeautifulSoup. You will learn about crawling, web spidering, working with AJAX websites, paginated items, and more. You will also learn to tackle problems such as 403 errors, working with proxy, scraping images, LXML, and more.By the end of this book, you will be able to scrape websites more efficiently and to be able to deploy and operate your scraper in the cloud.Style and approachThis book is a rich collection of recipes that will come in handy when you are scraping a website using Python.Addressing your common and not-so-common pain points while scraping website, this is a book that you must have on the shelf.

- 364 pages

- English

- ePUB (mobile friendly)

- Available on iOS & Android

eBook - ePub

Python Web Scraping Cookbook

About this book

Trusted by 375,005 students

Access to over 1 million titles for a fair monthly price.

Study more efficiently using our study tools.

Information

Making the Scraper as a Service Real

In this chapter, we will cover:

- Creating and configuring an Elastic Cloud trial account

- Accessing the Elastic Cloud cluster with curl

- Connecting to the Elastic Cloud cluster with Python

- Performing an Elasticsearch query with the Python API

- Using Elasticsearch to query for jobs with specific skills

- Modifying the API to search for jobs by skill

- Storing configuration in the environment

Creating an AWS IAM user and a key pair for ECS - Configuring Docker to authenticate with ECR

- Pushing containers into ECR

- Creating an ECS cluster

- Creating a task to run our containers

- Starting and accessing the containers in AWS

Introduction

In this chapter, we will first add a feature to search job listings using Elasticsearch and extend the API for this capability. Then will move Elasticsearch functions to Elastic Cloud, a first step in cloud-enabling our cloud based scraper. Then, we will move our Docker containers to Amazon Elastic Container Repository (ECR), and finally run our containers (and scraper) in Amazon Elastic Container Service (ECS).

Creating and configuring an Elastic Cloud trial account

In this recipe we will create and configure an Elastic Cloud trial account so that we can use Elasticsearch as a hosted service. Elastic Cloud is a cloud service offered by the creators of Elasticsearch, and provides a completely managed implementation of Elasticsearch.

While we have examined putting Elasticsearch in a Docker container, actually running a container with Elasticsearch within AWS is very difficult due to a number of memory requirements and other system configurations that are complicated to get working within ECS. Therefore, for a cloud solution, we will use Elastic Cloud.

How to do it

We'll proceed with the recipe as follows:

- Open your browser and navigate to https://www.elastic.co/cloud/as-a-service/signup. You will see a page similar to the following:

The Elastic Cloud signup page

- Enter your email and press the Start Free Trial button. When the email arrives, verify yourself. You will be taken to a page to create your cluster:

Cluster creation page

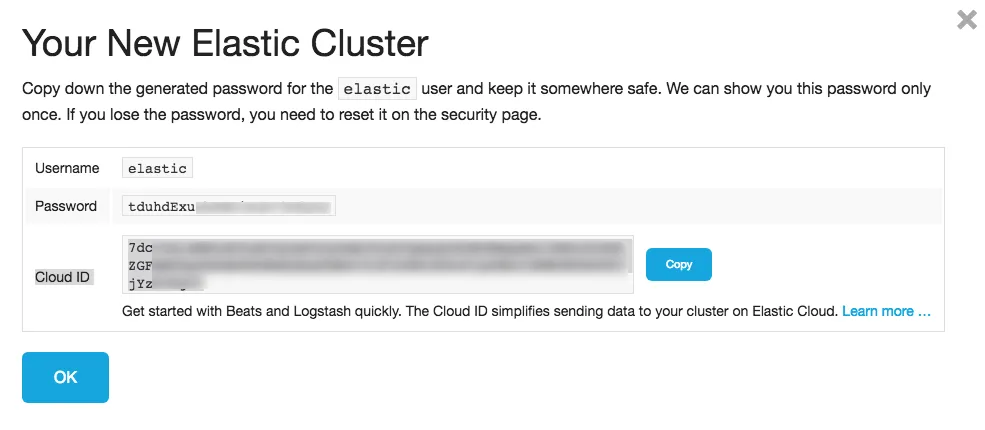

- I'll be using AWS (not Google) in the Oregon (us-west-2) region in other examples, so I'll pick both of those for this cluster. You can pick a cloud and region that works for you. You can leave the other options as it is, and just press create. You will then be presented with your username and password. Jot those down. The following screenshot gives an idea of how it displays the username and password:

The credentials info for the Elastic Cloud account

We won't use the Cloud ID in any recipes.

- Next, you will be presented with your endpoints. The Elasticsearch URL is what's important to us:

- And that's it - you are ready to go (at least for 14 days)!

Accessing the Elastic Cloud cluster with curl

Elasticsearch is fundamentally accessed via a REST API. Elastic Cloud is no different and is actually an identical API. We just need to be able to know how to construct the URL properly to connect. Let's look at that.

How to do it

We proceed with the recipe as follows:

- When you signed up for Elastic Cloud, you were given various endpoints and variables, such as username and password. The URL was similar to the following:

https://<account-id>.us-west-2.aws.found.io:9243

Depending on the cloud and region, the rest of the domain name, as well as the port, may differ.

- We'll use a slight variant of the following URL to communicate and authenticate with Elastic Cloud:

https://<username>:<password>@<account-id>.us-west-2.aws.found.io:9243

- Currently, mine is (it will be disabled by the time you read this):

https://elastic:tduhdExunhEWPjSuH73O6yLS@d7c72d3327076cc4daf5528103c46a27.us-west-2.aws.found.io:9243

- Basic authentication and connectivity can be checked with curl:

$ curl https://elastic:tduhdExunhEWPjSuH73O6yLS@7dc72d3327076cc4daf5528103c46a27.us-west-2.aws.found.io:9243

{

"name": "instance-0000000001",

"cluster_name": "7dc72d3327076cc4daf5528103c46a27",

"cluster_uuid": "g9UMPEo-QRaZdIlgmOA7hg",

"version": {

"number": "6.1.1",

"build_hash": "bd92e7f",

"build_date": "2017-12-17T20:23:25.338Z",

"build_snapshot": false,

"lucene_version": "7.1.0",

"minimum_wire_compatibility_version": "5.6.0",

"minimum_index_compatibility_version": "5.0.0"

},

"tagline": "You Know, for Search"

}

Michaels-iMac-2:pems michaelheydt$

And we are up and talking!

Connecting to the Elastic Cloud cluster with Python

Now let's look at how to connect to Elastic Cloud using the Elasticsearch Python library.

Getting ready

The code for this recipe is in the 11/01/elasticcloud_starwars.py script. This script will scrape Star Wars character data from the swapi.co API/website and put it into the Elastic Cloud.

How to do it

We proceed with the recipe as follows:

- Execute the file as a Python script:

$ python elasticcloud_starwars.py

- This will loop through up to 20 characters and drop them into the sw index with a document type of people. The code is straightforward (replace the URL with yours):

from elasticsearch import Elasticsearch

import requests

import json

if __name__ == '__main__':

es = Elasticsearch(

[

"https://elastic:tdu...

Table of contents

- Title Page

- Copyright and Credits

- Contributors

- Packt Upsell

- Preface

- Getting Started with Scraping

- Data Acquisition and Extraction

- Processing Data

- Working with Images, Audio, and other Assets

- Scraping - Code of Conduct

- Scraping Challenges and Solutions

- Text Wrangling and Analysis

- Searching, Mining and Visualizing Data

- Creating a Simple Data API

- Creating Scraper Microservices with Docker

- Making the Scraper as a Service Real

- Other Books You May Enjoy

Frequently asked questions

Yes, you can cancel anytime from the Subscription tab in your account settings on the Perlego website. Your subscription will stay active until the end of your current billing period. Learn how to cancel your subscription

No, books cannot be downloaded as external files, such as PDFs, for use outside of Perlego. However, you can download books within the Perlego app for offline reading on mobile or tablet. Learn how to download books offline

Perlego offers two plans: Essential and Complete

- Essential is ideal for learners and professionals who enjoy exploring a wide range of subjects. Access the Essential Library with 800,000+ trusted titles and best-sellers across business, personal growth, and the humanities. Includes unlimited reading time and Standard Read Aloud voice.

- Complete: Perfect for advanced learners and researchers needing full, unrestricted access. Unlock 1.4M+ books across hundreds of subjects, including academic and specialized titles. The Complete Plan also includes advanced features like Premium Read Aloud and Research Assistant.

We are an online textbook subscription service, where you can get access to an entire online library for less than the price of a single book per month. With over 1 million books across 990+ topics, we’ve got you covered! Learn about our mission

Look out for the read-aloud symbol on your next book to see if you can listen to it. The read-aloud tool reads text aloud for you, highlighting the text as it is being read. You can pause it, speed it up and slow it down. Learn more about Read Aloud

Yes! You can use the Perlego app on both iOS and Android devices to read anytime, anywhere — even offline. Perfect for commutes or when you’re on the go.

Please note we cannot support devices running on iOS 13 and Android 7 or earlier. Learn more about using the app

Please note we cannot support devices running on iOS 13 and Android 7 or earlier. Learn more about using the app

Yes, you can access Python Web Scraping Cookbook by Michael Heydt in PDF and/or ePUB format, as well as other popular books in Computer Science & Data Processing. We have over one million books available in our catalogue for you to explore.