Apache Kafka

What Is Apache Kafka?

Apache Kafka is an open-source distributed event streaming and stream processing platform originally developed by LinkedIn (Jason Bell et al., 2020). It functions as high-throughput, low-latency message-oriented middleware (MOM) designed to handle real-time data feeds (Tomcy John et al., 2017). Architected as a distributed commit log, Kafka decouples data sources from consumers, providing a unified platform for managing high-velocity, high-volume data streams across enterprise infrastructures (Tomcy John et al., 2017) (Raúl Estrada et al., 2017).

Architecture and Core Components of Apache Kafka

The Kafka architecture consists of brokers, which are server processes that form a cluster (Raúl Estrada et al., 2017). Data is organized into topics, which act as named, queue-like channels: producers publish messages to a topic, and consumers subscribe to it to read them (Jason Bell et al., 2020) (Jyotiswarup Raiturkar et al., 2018). Topics are further divided into partitions, each an ordered, immutable sequence of records appended to a commit log (Raúl Estrada et al., 2017). Each message within a partition is identified by a numerical offset that is unique within that partition, so records are always read back in the order they were written (Alexander Shuiskov et al., 2022) (Tomasz Lelek et al., 2022).
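
The topic, partition, and offset model described above can be illustrated with a minimal in-memory sketch. It is not Kafka's implementation: the `Topic` and `Partition` classes are hypothetical, and the CRC32-based key hashing is only a stand-in partitioner chosen so the example is deterministic.

```python
from dataclasses import dataclass, field
from zlib import crc32


@dataclass
class Partition:
    """An append-only sequence of records; a record's offset is its index."""
    records: list = field(default_factory=list)

    def append(self, value):
        self.records.append(value)
        return len(self.records) - 1  # offset of the record just written

    def read(self, offset):
        # Reading does not remove records; it returns everything from `offset` on.
        return self.records[offset:]


@dataclass
class Topic:
    name: str
    partitions: list

    @classmethod
    def create(cls, name, num_partitions):
        return cls(name, [Partition() for _ in range(num_partitions)])

    def publish(self, key, value):
        # Assumption: a stand-in partitioner that hashes the key with CRC32.
        index = crc32(key.encode("utf-8")) % len(self.partitions)
        return index, self.partitions[index].append(value)


topic = Topic.create("orders", num_partitions=3)
first = topic.publish("customer-1", "created")
second = topic.publish("customer-1", "paid")
```

Because both records share a key, they land in the same partition and receive consecutive offsets, so a reader of that partition sees `"created"` before `"paid"`. Ordering is only defined within a partition, not across the topic as a whole.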

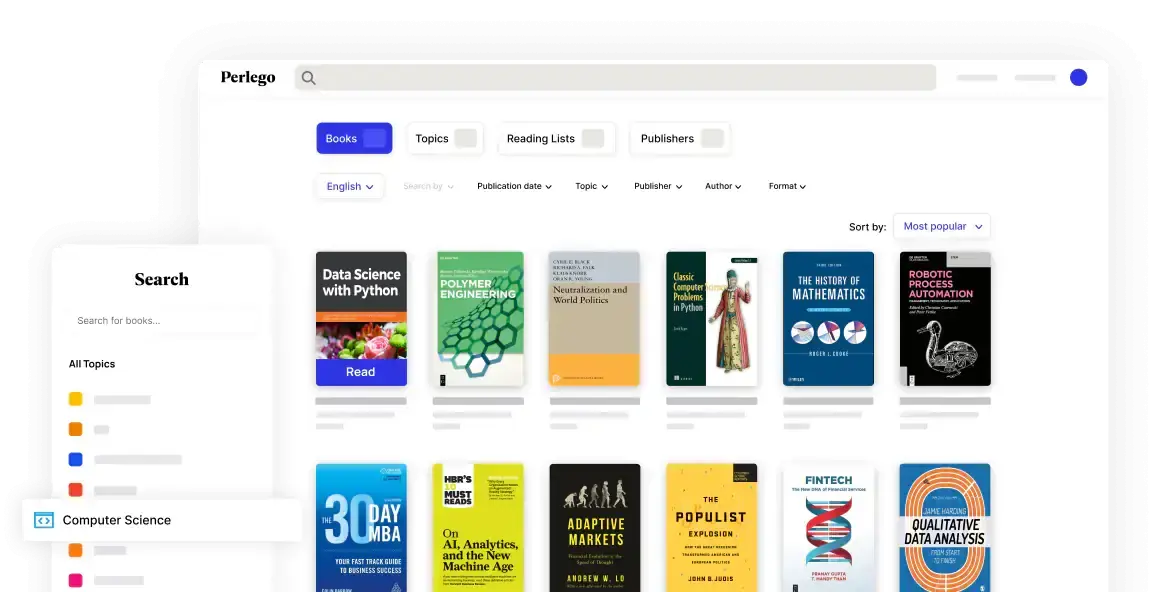

Operational Purpose and Functional Role

Kafka serves as a real-time publish-subscribe solution that isolates producers from consumers, ensuring that producers are not blocked by downstream processing (Raúl Estrada et al., 2017). It distributes messages durably across a cluster using In-Sync Replicas (ISRs): for each partition, a leader node handles requests while followers replicate its state (Jyotiswarup Raiturkar et al., 2018). Beyond simple messaging, the Kafka Streams API supports complex data transformations, representing unbounded, continuously updating data through a topology of processors (Sinchan Banerjee et al., 2022) (Joshua Garverick et al., 2023).
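
The idea of a processor topology over a continuously updating stream can be sketched with plain Python generators. This is an illustration only and does not use the Kafka Streams API (a Java library); the processor functions and the `user` and `amount` fields are hypothetical.

```python
from collections import defaultdict


def filter_processor(stream, predicate):
    """Pass through only the records that satisfy the predicate."""
    for record in stream:
        if predicate(record):
            yield record


def map_processor(stream, fn):
    """Transform each record as it arrives."""
    for record in stream:
        yield fn(record)


def running_total(stream):
    """Stateful processor: emit an updated total each time a record arrives."""
    totals = defaultdict(int)
    for user, amount in stream:
        totals[user] += amount
        yield user, totals[user]


def build_topology(source):
    # source -> filter -> map -> aggregate, wired lazily so it also works
    # on an iterator that never ends.
    valid = filter_processor(source, lambda r: r["amount"] > 0)
    pairs = map_processor(valid, lambda r: (r["user"], r["amount"]))
    return running_total(pairs)


events = [
    {"user": "a", "amount": 5},
    {"user": "b", "amount": -1},
    {"user": "a", "amount": 3},
]
updates = list(build_topology(iter(events)))
```

Here `updates` is `[("a", 5), ("a", 8)]`: the invalid record is dropped, and the total for `"a"` is re-emitted on every new record rather than computed once at the end, which is what distinguishes processing an unbounded stream from processing a finished batch.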

Scalability and Delivery Semantics

Kafka is highly scalable: a topic's partitions can be spread across multiple machines to support parallel processing and high write/read throughput (Alexander Shuiskov et al., 2022) (Jyotiswarup Raiturkar et al., 2018). It offers flexible durability through configurable retention periods and supports various delivery semantics, including at-least-once and exactly-once delivery (Raúl Estrada et al., 2017). This fault-tolerant design makes it a critical component in modern data lakes and IoT architectures, where it acts as a reliable buffer for backend processors such as Apache Spark (Tomcy John et al., 2017) (Chandrasekar Vuppalapati et al., 2019).
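
At-least-once delivery is commonly achieved by committing a consumer's offset only after a record has been processed. The simulation below is a minimal sketch of that idea under simplifying assumptions: the `Consumer` class, its `committed` field, and the `crash_before_commit_at` parameter are hypothetical and do not correspond to any Kafka client API.

```python
class Consumer:
    """Reads a partition (modeled as a list) and tracks a committed offset."""

    def __init__(self, log):
        self.log = log
        self.committed = 0  # next offset to read after a restart

    def poll_and_process(self, handler, crash_before_commit_at=None):
        offset = self.committed
        while offset < len(self.log):
            handler(self.log[offset])  # process first...
            if offset == crash_before_commit_at:
                return  # simulated crash: processed, but never committed
            offset += 1
            self.committed = offset  # ...then commit


log = ["a", "b", "c"]
seen = []
consumer = Consumer(log)
consumer.poll_and_process(seen.append, crash_before_commit_at=1)
consumer.poll_and_process(seen.append)  # restart from the committed offset
```

After the restart, `seen` is `["a", "b", "b", "c"]`: no record is lost, but `"b"` is handled twice because the crash happened between processing and committing. Committing before processing would reverse the trade-off, avoiding duplicates at the risk of losing a record, and approaching exactly-once behavior requires additional machinery such as idempotent handlers or transactions.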